Chapter 7: Gravity Wave Measurements were Contrived through Fake Science

Chapter Summary

Claimed measurement of gravity waves in 2015 and again in 2016 was contrived. Supposedly, one tenth of an atto meter in a 4 kilometer path was measured. The detector was a heavy pendulum which was said to have been moved slightly by a gravity wave caused by two black holes colliding 1.3 billion light years away. A third claimed measurement of gravity waves occurred in early 2017. Two black holes supposedly collided 3 billion light years away. If the bump actually showed up on the mirrors for one second, was someone looking through a telescope at the collision for that one second? The bump on the mirrors does not indicate the distance.

◆ ◆ ◆ ◆ ◆ ◆ ◆ ◆ ◆ ◆ ◆

A motion of 0.1 atto meters by a mirror was said to be measured by reflecting a laser beam off it. That distance is 2.87 billion times smaller than the distance between iron atoms in steel. It's 100 million times smaller than the vibration of atoms, which is a noise to signal ratio of 100 million to one. The distance variation being measured is 10 trillion times smaller than the wavelength of the laser beam detecting it. Such a minuscule change in wave position could not really be detected.

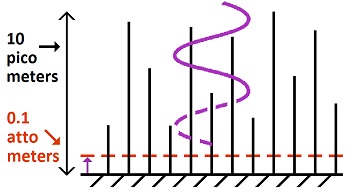

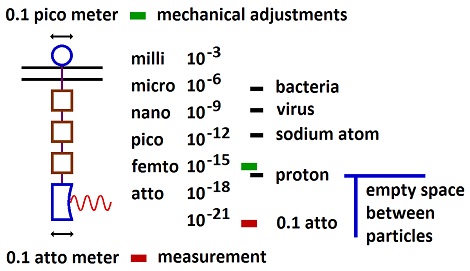

Physicists can't stretch this technology by factors of millions, billions and trillions. A factor of ten over commercial grade technology is where the boundaries are. Wizardry doesn't remove the overriding motion noise of vibrating molecules. With atoms vibrating at 10 pico meters, with harmonic, random and spurious effects, refinements are not going to occur at 0.1 atto meter, which is 100 million times smaller.

Scale of Measurement

None of the claims will actually work at the 0.1 atto meter level of the supposed signal.

1. One ten trillionth of laser light intensity was supposedly the variation being measured through interferometry.
2. The photodetector would produce 10 million to one noise to signal ratio.
3. Temperature change of 18 trillionths °C per second swamps the signal.
4. Inductive force for control of motion is not a control mechanism.
5. Motion control would have to look through the measurement detector, which would remove the signal.
6. Atomic vibrations are 100 million times greater than the signal. That's a noise to signal ratio of 100 million to one.

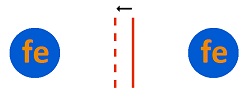

Space Between Iron Atoms

Notice that the moving, controlling and measuring is occurring in less space than the distance between atoms. The measurement is 2.87 billion times smaller than an iron atom (287 pico meters diameter). ($287\times10^{-12} \div 0.1\times10^{-18} = 2.87\times10^{9}$)

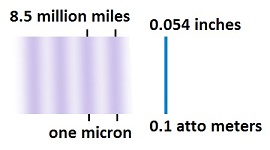

This image shows motion that is one tenth of the distance between iron atoms. Nothing can be controlled at so small a distance. Now move the iron atoms 287 million times farther apart, or make the movement 287 million times smaller. Nothing resembling it can occur. Molecular vibrations occur at 10 pico meters for solids (2). That's 100 million times greater than the measurement. Nothing can be measured at a noise to signal ratio of 100 million to one.
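The ratios quoted in this section can be checked with a few lines of arithmetic. The sketch below is only an illustration: the variable and function names are invented for this example, and the lengths are the ones given in the text.

```python
# Lengths in meters, taken from the text above.
IRON_SPACING = 287e-12      # distance between iron atoms (287 pico meters)
ATOMIC_VIBRATION = 10e-12   # vibration of atoms in solids (10 pico meters)
SIGNAL = 0.1e-18            # claimed mirror motion (0.1 atto meter)


def ratio(larger: float, smaller: float) -> float:
    """Return how many times larger the first length is than the second."""
    return larger / smaller


# Iron atom spacing compared with the claimed signal.
spacing_ratio = ratio(IRON_SPACING, SIGNAL)

# The image shows one tenth of the iron spacing; compare that with the signal.
tenth_spacing_ratio = ratio(IRON_SPACING / 10, SIGNAL)

# Atomic vibration compared with the signal (noise to signal ratio).
vibration_ratio = ratio(ATOMIC_VIBRATION, SIGNAL)

print(f"iron spacing / signal:          {spacing_ratio:.3g}")
print(f"one tenth of spacing / signal:  {tenth_spacing_ratio:.3g}")
print(f"atomic vibration / signal:      {vibration_ratio:.3g}")
```

The three results correspond to the 2.87 billion, 287 million and 100 million figures used in the text.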

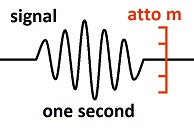

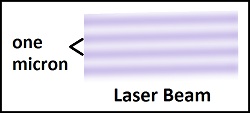

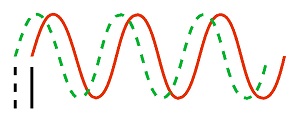

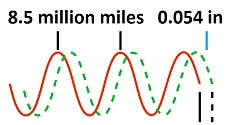

This image shows a shift in the laser beam of 20% of one wavelength. It would create a 20% change in light intensity on the detector of the interferometer. Now reduce it by a factor of two trillion. The result is claimed to be how much shift in the laser beam and change in light intensity was detected. A signal changing the distance and light intensity by one part in 10 trillion was supposedly detected. The description said 280 reflections were used to increase sensitivity. Dividing two trillion by 280 gives 7.1 billion. It means the change in distance and light intensity would be 7.1 billion times smaller than shown in this image. No wave is pure enough, and no detector is sensitive enough, for such a minuscule effect.

The claim of multiple reflections shows the underlying fallacy of the entire subject. It's the assumption that numbers can be multiplied out infinitely. When the noise to signal ratio is 100 million to one, the noise gets multiplied out more than the signal does. Every event increases the noise without purifying the signal. This problem is obvious in electronics, where the noise cannot be imagined away. Physicists do a lot of pretending where their critics cannot measure and prove what they are doing.

Atoms vibrate at 10 pico meters ($10^{-11}$ meters) (2. Wikipedia), which is 100 million times larger than the measurement of 0.1 atto meters. That's a noise to signal ratio of 100 million to one. Nothing can be measured when noise is greater than the measurement.

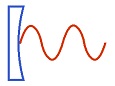

This image shows 10 pico meters of atomic vibrations as noise motion. The laser wave must reflect off the surface, which is in constant, random motion at 100 million times the distance change being measured. (That's 10 pico meters divided by 0.1 atto meters, which equals 100 million.) The vibrating atoms would create a diffusion of the reflecting wave by 10 pico meters, if the wave were perfect to start with. But of course it would have any amount of undetermined diffusion to start with.

Physicists would respond with one of their common assumptions: that a measurement will only see the average and can therefore read through any amount of something that averages out. The fallacy is that the average does not stay consistent. It changes at the rate of the noise motion, which includes harmonic and spurious changes, including "popcorn" noise, at all frequencies between the 83 femto seconds of each vibration and the one second of measurement. The variations in noise motion would create a blur over the distance range of 10 pico meters, totally wiping out the measurement.

The detecting instrument would have been nonfunctional. The gravity wave was supposedly traveling at the speed of light. A detecting mirror had a path length of 4 kilometers. Length means distance between two points. The other point was a "beam splitter." Light travels 4 km in 13 micro seconds. This means the second point (beam splitter) would be moving the same way as the mirror with a time lag of 13 µs (if the event were aligned exactly upon the horizon).

The event being measured was said to occur for one second, which is too convenient for reality without contrivance. Can you believe two black holes colliding for one second? Actual events of such magnitude would have strung noise for light years. The one second was split into several sine waves. The effect being measured would only produce a result for 13 micro seconds for each change in direction of motion, since the second point would track the first point after 13 µs of difference.

The original image of the signal (which appears to have been removed from the web site for LIGO) showed about ten sine waves, when including the tiny ones on the ends. This means each sine wave lasted about one tenth of a second. A measurement distance change could only occur for 13 micro seconds. Dividing 0.1 seconds by 13 µs equals 7,700. This means only 0.00013 (0.013%) of each sine wave would have shown up on the instrument. So small a part of a sine wave would not have been distinguishable as a sine wave. And the wave shape would have been spikes for each change in direction, not sine waves.
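The timing figures in this argument can be reproduced as follows. This is a minimal sketch under the assumptions stated in the text (a 4 km path, about ten sine waves in one second); the names are invented for the example, and the speed of light is the standard defined value.

```python
SPEED_OF_LIGHT = 299_792_458.0  # meters per second (defined value)
ARM_LENGTH = 4_000.0            # meters, the 4 km path length from the text
CYCLE_DURATION = 0.1            # seconds, about one tenth of the one second event

# Time for light to travel the 4 km path once.
transit_time = ARM_LENGTH / SPEED_OF_LIGHT

# The text rounds the transit time to 13 micro seconds.
ROUNDED_TRANSIT = 13e-6

# How many transit times fit into one sine wave of about 0.1 second.
cycles_ratio = CYCLE_DURATION / ROUNDED_TRANSIT

# Fraction of each sine wave that falls inside one transit time.
visible_fraction = ROUNDED_TRANSIT / CYCLE_DURATION

print(f"transit time:     {transit_time * 1e6:.1f} micro seconds")
print(f"0.1 s / 13 us:    {cycles_ratio:.0f}")
print(f"visible fraction: {visible_fraction:.5f} ({visible_fraction:.3%})")
```

The exact quotient is slightly under 7,700; the text rounds it to that figure.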

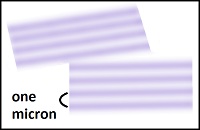

The interferometry method used is too hypothetical, while realities would overwhelm the results. The laser wavelength was 1064 nano meters (rounds to one micron), which is 10 trillion times larger than the distance being measured. This ratio spreads the variation distance out by a factor of 10 trillion and dilutes the light by that much. Total interference is required to remove background light. To get this result requires matching the distances of two paths to within one tenth of an atto meter. Such adjustments would be impossible regardless of noise removal. Not only is one ten trillionth of the laser light measured, the noise must be removed to one ten trillionth of the laser wavelength, and the positional difference in path lengths must be controlled to one ten trillionth of the wavelength of the laser beam after traveling over 4 kilometers and being reflected 280 times for more than a thousand km of total distance. The noise and adjustment errors are multiplied by 280 with the reflections.
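The two scale figures in this paragraph, the wavelength ratio and the total distance traveled, can be checked with the sketch below. The names are invented for the example, and the total distance is taken as the 4 km path multiplied by the 280 reflections, which is an assumption about how the "more than a thousand km" figure was reached.

```python
LASER_WAVELENGTH = 1064e-9  # meters (1064 nano meters)
SIGNAL = 0.1e-18            # meters (0.1 atto meter)
PATH_LENGTH_KM = 4.0        # kilometers
REFLECTIONS = 280           # number of reflections from the description

# Wavelength of the laser compared with the distance being measured.
wavelength_ratio = LASER_WAVELENGTH / SIGNAL

# Total distance, assuming each reflection covers the full path once.
total_path_km = PATH_LENGTH_KM * REFLECTIONS

print(f"wavelength / signal: {wavelength_ratio:.3g}")
print(f"total path:          {total_path_km:.0f} km")
```

With the exact 1064 nano meter wavelength the ratio comes out a little above 10 trillion, which the text rounds by treating the wavelength as one micron.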

Interferometry means two light waves interfere with each other to create an effect with light. When the waves precisely overlap, they cancel each other and become dark. Any change in the alignment, and some light appears. Change in the distance the light travels will misalign the waves and create some light. The amount of light depends upon the amount of change in alignment. The measurement is in the misalignment of two waves. A variation of 0.1 atto meter is said to be detected. The actual distance that the waves travel is of no relevance. It is not measured or analyzed. Only the offset of waves is detected.

The light waves are produced by laser beams which have a wavelength of approximately one micron (one micro meter). The change in distance said to be detected is 0.1 atto meter. That's 10 trillion times as much distance in one wave as the detected distance change. The laser beam is reflected 280 times, which increases the detection distance change to 28 atto meters. That's still 36 billion times less detection distance than the size of one wave of the laser beam. Such a minuscule influence over the laser beam would not really be detectable.

One problem is diffusion of light. Light cannot be created perfect or made perfect after it is created. The imperfections create diffusion. Then the reflection off imperfect mirrors 280 times adds more diffusion. Beam splitters would require heterogeneity of matter and light to split a light wave.

The light detector is said to be a photo diode. The problem with silicon based devices is that they produce a lot of current noise, thermal noise, popcorn noise and drift. The silicon has to be spiked with dopants to create a diode, which creates a lot of variations. Since obfuscation is the standard, a vacuum tube detector, called a photomultiplier, might be used.
These puppies create super amplification, which should be good for a lot of contrived magic, except that the more the amplification, the less the resolution, while the degree of resolution is the problem in picking the signal out of 10 trillion times as much background light. Interferometry is not a realistic procedure for highly demanding measurements due to the crudeness of light waves. At the macro scale, telescopes gathering light create the impression of high precision, but this is due to the accumulation of a large number of waves. At the micro scale, individual waves are used, and they are too diffuse and imperfect for high precision. But physicists exploit the vagaries to create the desired obfuscation and ability to contrive without accountability.
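The effect of the 280 reflections on the numbers above can be sketched in a few lines. The names are invented for this example, and the wavelength is rounded to one micron as in the text.

```python
LASER_WAVELENGTH = 1e-6  # meters, one micron (rounded, as in the text)
SIGNAL = 0.1e-18         # meters, claimed distance change (0.1 atto meter)
REFLECTIONS = 280        # number of reflections from the description

# Distance change after being multiplied by the number of reflections.
amplified_signal = SIGNAL * REFLECTIONS

# One wavelength compared with the distance change before and after reflections.
ratio_before = LASER_WAVELENGTH / SIGNAL
ratio_after = LASER_WAVELENGTH / amplified_signal

print(f"amplified signal:         {amplified_signal * 1e18:.0f} atto meters")
print(f"wavelength / signal:      {ratio_before:.3g}")
print(f"wavelength / (280 x sig): {ratio_after:.3g}")
```

The last value is the roughly 36 billion to one ratio quoted for the laser wave against the multiplied distance change.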

The mechanical reduction of noise motion is said to leave 0.1 pico meter of noise (later changed to 0.2 pico meters), and a pendulum effect reduces the noise motion by another factor of a million to get to the 0.1 atto meter of measurement. If the mechanical noise reduction cannot function below 0.1 pico meter, then the path length cannot be aligned to 0.1 atto meter for interferometry. The interferometry requires alignment of the two paths within the measurement distance of 0.1 atto meters, which is beyond the sensitivity of the claimed mechanical motion used to remove noise. That is a self-contradiction.

All noise which does not average zero motion will not be removed by the pendulum. Earth noise will not average around zero motion. Mechanical control cannot move the pendulum to zero motion below its precision of 0.1 pico meters (not to mention the fact that the claimed induction force for mechanical control is not a control mechanism). It means the pendulum is constantly moving.

Removing noise motion with the pendulum effect cannot remove molecular vibrations. Atomic vibrations for solids are said, on Wikipedia, to typically be 10 pico meters ($10^{-11}$ m) (2). That means atoms moving at 100 million times greater distance than the measurement. Nothing can be measured through a noise to signal ratio of 100 million to one. This motion includes the reflective surface, causing it to look the same as the signal. Such motions include harmonics with random and spurious effects. These noise motions are 100 times greater than the claimed mechanical control over noise motion at 0.1 pico meters, in addition to 100 million times greater than the signal motion.
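The chain of factors in this argument (atomic vibration, mechanical control, signal) can be laid out step by step. This is only a sketch with invented names; the three lengths are the ones stated in the text.

```python
ATOMIC_VIBRATION = 10e-12      # meters (10 pico meters)
MECHANICAL_CONTROL = 0.1e-12   # meters (0.1 pico meter of claimed control)
SIGNAL = 0.1e-18               # meters (0.1 atto meter)

# Atomic vibration compared with the claimed mechanical control.
vibration_over_control = ATOMIC_VIBRATION / MECHANICAL_CONTROL

# Mechanical control compared with the signal (the pendulum factor).
control_over_signal = MECHANICAL_CONTROL / SIGNAL

# The two factors multiplied together give vibration compared with signal.
vibration_over_signal = vibration_over_control * control_over_signal

print(f"vibration / control: {vibration_over_control:.3g}")
print(f"control / signal:    {control_over_signal:.3g}")
print(f"vibration / signal:  {vibration_over_signal:.3g}")
```

Written this way, the 100 million to one figure is the product of the factor of 100 and the pendulum factor of a million.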

If the reflecting mirrors are made of silica 1 cm thick, and the temperature changes by more than 18 trillionths of a degree centigrade per second, it wipes out the measurement. ($1\times10^{-19}\ \text{m} \div (0.01\ \text{m} \times 5.5\times10^{-7}/^\circ\text{C}) = 18\times10^{-12}\ ^\circ\text{C}$) (The coefficient of thermal expansion for silica is $5.5\times10^{-7}/^\circ\text{C}$.)

A laser beam supposedly adds heat to the surface to prevent temperature change. Absorbed by what in transparent silica with a reflective coating? The mirrors are designed to not absorb the measuring beam, and they are going to absorb a heating beam? Temperatures are not uniform when adding heat of such minuscule amounts through such thick material. There is no way to cool the mirrors. Any cooling would be unstable, imprecise and nonuniform. There is no air for controlling temperature, as the environment is high vacuum. All five points that the measuring wave touches have to be controlled for temperature and noise, including the starting point and end point. The temperature of the detector cannot be controlled with a radiation wave, because it absorbs radiation.

Controlling temperature requires measuring what is being controlled. There is no way to measure temperature change to trillionths of a degree centigrade. There is no such thing as stable temperature, just as there is no such thing as water in a river which is not moving. Temperature is more fluid than water. It is always increasing or decreasing. Part of the reason is that heat is always being added or removed through radiation. All mass is constantly radiating in proportion to its temperature. Another cause of temperature change is heterogeneous materials, which cause variations in rates of temperature movement and radiation.
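The temperature figure follows from the linear thermal expansion relation (change in length = coefficient × length × change in temperature), solved for the temperature change. The sketch below uses invented names and the values given in the text.

```python
SIGNAL = 1e-19               # meters, the 0.1 atto meter measurement
MIRROR_THICKNESS = 0.01      # meters, silica mirror 1 cm thick
SILICA_EXPANSION = 5.5e-7    # per degree C, coefficient from the text


def temperature_change_for_expansion(delta_length: float,
                                     length: float,
                                     coefficient: float) -> float:
    """Solve delta_length = coefficient * length * delta_T for delta_T."""
    return delta_length / (length * coefficient)


# Temperature change that expands the mirror by the size of the signal.
delta_t = temperature_change_for_expansion(
    SIGNAL, MIRROR_THICKNESS, SILICA_EXPANSION
)

print(f"temperature change: {delta_t:.3g} degrees C")
print(f"in trillionths:     {delta_t * 1e12:.0f}")
```

Note that the thickness and the coefficient are multiplied together before dividing, which is the grouping needed to arrive at 18 trillionths of a degree.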

The claimed mechanical removal of noise motion to one tenth of a pico meter is a contradiction of these facts: The mechanism has to move about 80 kilograms (176 lb) of mass, which is the weight of 4 objects, 20 kg each, which hang as a pendulum. All mechanical devices require some free space where motion occurs. In other words, if joints are too tight, nothing moves. An iron atom (crystalline) is 287 pico meters across, which is 2,870 times larger than the claimed, controlled distance of 0.1 pico meter.

How much free space does it require? Certainly more than thermal expansion. The coefficient of thermal expansion for iron is $11.8\times10^{-6}$ per °C. A one centimeter wide collar or shaft will expand 118 nano meters over 1°C ($11.8\times10^{-6} \times 0.01\ \text{m} = 118\times10^{-9}\ \text{m}$), requiring at least that much clearance. This means its motion is not defined over at least that much distance. This distance is 1.18 million times greater than the claimed 0.1 pico meters of control for noise motion removal ($118\times10^{-9} \div 0.1\times10^{-12} = 1.18\times10^{6}$). It means there is at least 1.18 million times as much undefined distance as claimed control distance, just for the removal of noise motion, and a million times more discrepancy in claiming to remove the difference between two paths down to 0.1 atto meter for interferometry.
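The clearance argument can be worked through numerically. The sketch below evaluates the quotients given in parentheses above; the names are invented for the example, and the input values are the ones stated in the text.

```python
IRON_EXPANSION = 11.8e-6     # per degree C, coefficient for iron from the text
SHAFT_WIDTH = 0.01           # meters, a one centimeter collar or shaft
TEMPERATURE_RISE = 1.0       # degrees C
IRON_ATOM = 287e-12          # meters (287 pico meters)
CONTROL_DISTANCE = 0.1e-12   # meters (0.1 pico meter of claimed control)

# Linear thermal expansion: coefficient * length * temperature change.
expansion = IRON_EXPANSION * SHAFT_WIDTH * TEMPERATURE_RISE

# Expansion (minimum clearance) compared with the claimed control distance.
clearance_ratio = expansion / CONTROL_DISTANCE

# Size of an iron atom compared with the claimed control distance.
atom_ratio = IRON_ATOM / CONTROL_DISTANCE

print(f"expansion over 1 degree C: {expansion * 1e9:.0f} nano meters")
print(f"expansion / control:       {clearance_ratio:.3g}")
print(f"iron atom / control:       {atom_ratio:.0f}")
```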

The descriptive material now says that positional control used inductive coil control like a speaker, implicitly meaning there were no levers or gears that require mechanical free space. Motion cannot be precisely controlled with such coil induction. Inductive force without levers or gears does not produce a definable position. It's the same thing as pulling with a spring. Solid connections are needed to define positions. The spring-like inductive force must overcome friction and inertia of acceleration, which both vary, and the position varies with the counter-force.

It's important to know what the problem of mechanical free space is, because it would be the only reason why inductive force was said to be used. No one uses inductive force without mechanics for position control anywhere in technology, because it is not a controllable mechanism. The only thing inductive force without complex parts can be used for is two-position solenoids or switches.

On top of that, the use of inductive force for motion control did not actually remove the problem of mechanical free space, because there must be a lot of free space around an inductor. Even though the direction is different, being parallel to the motion, there are still slight angular variations which wipe out the control over distance. But this effect is trivial compared to the ridiculousness of using inductive force to control position. If a 10 centimeter rod within the inductor rotated by 100 pico meters at each end (5 cm each from center), the end would shorten the distance by the claimed measurement of 0.1 atto meters. Not only is 100 pico meters not enough free space, the edges are going to rub and create friction with any amount of free space.

But an inductor does not produce a definable position to reference to. If the claim were that the inductor moves the mirror until the light goes dark on the photodetector, the adjustment would be removing the signal.
But the inductor could not produce enough precision to do anything. It would jerk back and forth endlessly without matching any feedback requirements. When physicists claim to use inductive force for the most precise measurement ever claimed, it means they are disregarding precision in exchange for a describable mechanism. It's physics by word salad instead of laws of nature.
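The 10 centimeter rod example above can be checked with simple geometry. If one half of the rod (5 cm) tilts so that its end moves sideways by a small distance d, the length along the original axis shortens by L − √(L² − d²), which for very small d is approximately d² / (2L). The sketch below uses that small-angle approximation, because evaluating the square root form directly in ordinary floating point loses the result to rounding at this scale. The names are invented, and reading "100 pico meters angular" as a sideways displacement of the rod end is an assumption.

```python
HALF_ROD_LENGTH = 0.05          # meters, 5 cm from center to each end
SIDEWAYS_DISPLACEMENT = 100e-12  # meters, 100 pico meters at the rod end
SIGNAL = 0.1e-18                # meters, the claimed 0.1 atto meter measurement


def axial_shortening(half_length: float, sideways: float) -> float:
    """Small-angle approximation of L - sqrt(L^2 - d^2), which is d^2 / (2 L)."""
    return sideways ** 2 / (2.0 * half_length)


# Shortening along the axis caused by the tilt of one half of the rod.
shortening = axial_shortening(HALF_ROD_LENGTH, SIDEWAYS_DISPLACEMENT)

print(f"axial shortening:    {shortening:.3g} meters")
print(f"shortening / signal: {shortening / SIGNAL:.3g}")
```

Under these assumptions the shortening comes out at the same size as the claimed measurement.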

Another problem is measurement for the feedback element of control. There is no method of determining what the desired position should be other than looking at the end result, which is the photodetector for the measurement. Correcting position at a higher frequency than the measurement removes the measurement. All alignment through the measuring device must be at such a low frequency that measurement occurs between alignment events. Since the measurement occurred over one second of time, alignment would have to not be occurring during that one second of time. Yet the claim is that adjustments were made at a frequency of 16,384 times per second.

The assumption is that a little noise motion with the mechanical device can be tolerated, and its precision is 0.1 pico meters, while the pendulum effect removes the rest of the noise. That type of logic is for visible effects in the millimeter range, not for a tenth of a quadrillionth ($10^{-16}$) of that. What happens is that the mechanical position control mechanism must look for a light change in the photodetector to see if its position needs to be corrected. There is no other method of feedback control at 0.1 pico meters, which is 2,870 times smaller than the distance between the iron atoms in the steel. Accelerometers don't work at that level.

So the mechanical position control device is trying to remove changes in light intensity at 16,384 times per second, while the signal is trying to produce one part per 10 trillion change in the light intensity for one second. That process breaks down and doesn't work. The mechanical control over motion cannot respond to light changes in the photodetector if it is only producing crude effects which are removed by the pendulum. Yet there is no other form of feedback information but changes in light in the photodetector.
Descriptions don't say what type of feedback mechanism is used for position control, but there is no position measuring mechanism that functions at thousandths of the distance between iron atoms. The mechanical position controlling mechanism must look at the signal on the photodetector to match waves for interferometry. If the scale were more normal, no match of waves would be required for interferometry, and the signal could read through any level of light that was stable, but not at 0.1 atto meter over 4 kilometers of path length. If accelerometers were removing motion independent of the output of the photodetector, every device would have to have less than 0.1 atto meter of absolute value position change, which includes all mirrors, splitters and the detector. There is no technology that works at that level.

The motion is detected by a laser beam which bounces off a vibrating mirror. The laser beam wavelength is ten trillion times greater than the motion being detected. That's one micron (rounded) wavelength for the laser and 0.1 atto meter for the motion being detected. ($1\times10^{-6} \div 0.1\times10^{-18} = 10\times10^{12}$)

If, then, the motion is the width (0.054 inch) of the ink line between New York and Los Angeles, the wavelength of the laser beam is 36 times the distance to the moon. ($0.054\ \text{in} \times 10\times10^{12} \div 12\ \text{in/ft} \div 5280\ \text{ft/mi} = 8.52\times10^{6}\ \text{mi}$; $8.52\times10^{6}\ \text{mi} \div 240{,}000\ \text{mi} = 36$) The claim is that a laser beam equivalent to 36 times the distance to the moon in wavelength (one tenth the distance to the sun) would detect a motion equivalent to a vibrating ink line. The diffusion and random effects in the laser beam (noise) would cause such a small amount of motion to disappear in the light.
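The ink line comparison can be reproduced with the unit conversions written out. This is a sketch with invented names; the ink line width, the ratio and the moon distance are the figures from the text, while the distance to the sun (about 93 million miles) is a value assumed here for the final comparison.

```python
INK_LINE_WIDTH_IN = 0.054        # inches, width of the ink line from the text
WAVELENGTH_RATIO = 10e12         # laser wavelength / motion, from the text
INCHES_PER_FOOT = 12
FEET_PER_MILE = 5280
MOON_DISTANCE_MI = 240_000       # miles, figure used in the text
SUN_DISTANCE_MI = 93_000_000     # miles, assumed value (not stated in the text)

# Scale the ink line up by the same ratio as wavelength to motion.
scaled_wavelength_in = INK_LINE_WIDTH_IN * WAVELENGTH_RATIO

# Convert inches to miles.
scaled_wavelength_mi = scaled_wavelength_in / INCHES_PER_FOOT / FEET_PER_MILE

# Compare with the distances to the moon and the sun.
moon_multiples = scaled_wavelength_mi / MOON_DISTANCE_MI
sun_fraction = scaled_wavelength_mi / SUN_DISTANCE_MI

print(f"scaled wavelength: {scaled_wavelength_mi:.3g} miles")
print(f"moon distances:    {moon_multiples:.1f}")
print(f"fraction of sun:   {sun_fraction:.2f}")
```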

Limitations on Photodetector

Even if the laser beam were perfect and delivered the one tenth of a trillionth variation in its intensity, no detector can respond to such minuscule effects, because detectors have their own noise effects, which are parts per thousand for solid state and parts per million for vacuum tubes. At one part per million noise in the detector, the miss would be a factor of 10 million. ($10\times10^{12} \div 1\times10^{6} = 10\times10^{6}$) That's one part per ten trillion resolution demand being met with one part per million sensitivity.
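The size of the miss can be computed for both detector types mentioned above. The sketch uses invented names and treats "parts per thousand" and "parts per million" as exactly one part in a thousand and one part in a million, which is an assumption made for the arithmetic.

```python
REQUIRED_RESOLUTION = 10e12   # one part per ten trillion demanded by the signal
VACUUM_TUBE_NOISE = 1e6       # one part per million (assumed exact)
SOLID_STATE_NOISE = 1e3       # one part per thousand (assumed exact)


def shortfall(required_parts: float, detector_parts: float) -> float:
    """Return the factor by which the detector misses the required resolution."""
    return required_parts / detector_parts


vacuum_tube_miss = shortfall(REQUIRED_RESOLUTION, VACUUM_TUBE_NOISE)
solid_state_miss = shortfall(REQUIRED_RESOLUTION, SOLID_STATE_NOISE)

print(f"vacuum tube miss: {vacuum_tube_miss:.3g}")
print(f"solid state miss: {solid_state_miss:.3g}")
```

The vacuum tube case gives the factor of 10 million quoted in the text; on the same assumptions the solid state case is a thousand times worse.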

Here's a concept error that shows the fakery and stupidity of the physicists: Physicists went to a lot of trouble to create a path length of 4 kilometers (about 2.5 miles) for the laser beam which reflected off a mirror for measuring gravity waves. They say in their presentation at LIGO that the long path length takes longer for the light to get back, which gives them more time in the measurement. The measurement is not based on time. It is based on a micro distance variation of 0.1 atto meters. A better path length would have been a meter or two. There was a lot of expense in creating the most perfect vacuum possible in a tube 4 km long.

Their error in thought came out of the Michelson-Morley experiment of 1887, where the purpose was to determine if light moved at a different velocity parallel to the earth's motion relative to perpendicular to it. A greater path length would show more difference. But the LIGO experiment was not a study of the light. The laser light was only the yardstick, not the question being studied. This error not only shows the stupidity of the physicists, it shows that they never actually went through a real process which produced a real result. A real analysis would have clued them into the error. It was only the total contrivance that allowed them to miss such an error.

One of the things this error shows is that physicists are total idiots. You might think their complex math shows they are geniuses, but the opposite is true. The math is only a snow job with no relationship to objective reality. Could anyone really believe that their math shows the existence of worm holes which are going to allow them to get to the next planet without the physical limitations of the laws of physics? Physicists reduce everything to absurd math, because it's like a language which cannot be decoded. Physicists cannot check each other's math, because it doesn't reverse engineer due to the unlimited variations in the use of symbols.
So they replicate a few confusing math equations and contrive the results out of thin air. Out of it they get such absurdities as the Stefan-Boltzmann constant, which shows 20-50 times too much radiation being emitted from matter at normal temperatures, as explained in the global warming section of this web site.

1. The project is described at https://www.ligo.caltech.edu/page/vibration-isolation.
2. Atomic Vibrations (10 pico meters), Wikipedia. https://en.m.wikipedia.org/wiki/Atom_vibrations

(The 0.1 pico meter control over mechanical motion was modified on the web site for LIGO to 0.2 pico meter. This and other trivial changes on the web site are irrelevant to the criticism. The trivia is now scrambled and self-contradictory, so there is no need to try to adapt to it.)