Chapter 3: Global Warming Contrived Through Fake Science

Chapter Summary

Absorbed radiation is re-emitted in 83 femto seconds on average, which means there is no such thing as trapping heat in the atmosphere. Without trapping heat, there is no such thing as a greenhouse effect. Since heat isn't trapped, it can't spread to the surrounding 2,500 air molecules. Without significant dilution, each CO2 molecule would have to increase 2,500°C to heat the total air 1°C, an impossibility. If all 0.7°C heating said to be caused by humans went into the surface of the oceans to a depth of 100 meters, the temperature increase would be 0.025°C, and none would be left in the air. It means hurricanes are not caused by global warming, nor is coral bleaching.

There are two reasons why the concept of global warming is ridiculous. One is that only a miniscule amount of energy is involved. The second is that even the tiny amount disappears in femto seconds and does not accumulate. Very little energy leaves the surface of the earth as radiation. It's about 1-3%. Climatologists claim it is 79%. They use an erroneous Stefan-Boltzmann constant to get that result. Even though they know 79% is ridiculous, they can't reduce the number, as they have tried to do but failed because of the erroneous Stefan-Boltzmann constant. If the radiation is 2% while said to be 79%, the miss is a factor of 40 on whatever global warming does. If you blow on hot soup for 8 seconds, it would do the same thing as letting it sit for 10 seconds, if 80% of the heat loss were due to radiation and there were only a 20% gain from blowing. Cooling fans would not exist if radiation were doing that much cooling. In cooling electronic components, various techniques are used, and radiation is not even a relevant consideration in the process. Scientists bicker over a half of a degree (50%), while the most obvious error is a factor of 40 (4,000%). They are a bunch of incompetents and frauds.
Another error/misrepresentation is saturation. It means carbon dioxide absorbs all radiation available to it in traveling 10 meters under near-surface conditions. Therefore, adding more CO2 to the atmosphere only changes the distance without increasing the heat. This number is extremely easy to measure in a laboratory, but all mention of saturation disappeared after a brief mention in the 2001 IPCC report. Somehow, radiative transfer equations made saturation disappear. A German physicist, Heinz Hug, did such a laboratory measurement and said the distance for saturation is 10 meters and indicated that others found the same. He was not allowed to publish such contradictory evidence to global warming, so he put his results on the internet. Since saturation cannot be made to disappear through mathematics, why is there no verification of the number by "peer reviewed" officials? The 2001 IPCC report said that edge frequencies are absorbed in longer distances, and they create global warming. That's like saying ants on the road determine gas mileage. The edges would have to be one thousandth of the CO2 to get to the top of the troposphere for saturation distance (10 km height divided by 10 meters is 1,000). Only less CO2 than that would be available to create global warming within the troposphere. This miniscule number of CO2 molecules cannot heat the atmosphere, because any energy they acquire disappears in 83 femto seconds on average. These numbers might have little meaning to nonscientists, but there are methods of looking at them that are easy to grasp. There would have to be some form of accumulation of heat to get miniscule quantities to add up to relevance. This is why propagandists in science concocted terminology related to greenhouses. They say "heat trapping gases" create a "greenhouse effect." Nothing resembling such an effect is possible. Consider what a fraud this terminology is.
A greenhouse uses glass or plastic to stop wind currents while radiation is allowed to go through. There is no such physical barrier in the atmosphere, and wind currents would be irrelevant. All matter emits radiation constantly, and the transparent gas of the atmosphere allows radiation to escape from every molecule, unlike an opaque solid which only allows radiation to escape from the surface. This constant emission of radiation prevents the heat picked up by CO2 from accumulating. If it can't accumulate, it does nothing, which is why propagandists focus upon a fake accumulation through a greenhouse effect. Each carbon dioxide molecule in the atmosphere is surrounded by 2,500 air molecules. With saturation being considered, only one thousandth of these are relevant, which means each of these CO2 molecules is surrounded by 2.5 million air molecules. The heat which CO2 molecules acquire cannot spread to the other air molecules, unless it can accumulate. Otherwise, it disappears as fast as it is acquired. A trace amount of energy would spread to about five nearby molecules by bumping (conduction of heat), but those molecules are also radiating. With five molecules radiating away the picked up energy, it disappears awfully fast. Since the energy cannot spread to the surrounding 2,495 air molecules (much less, 2.5 million), each CO2 molecule (plus its five helpers) must be 2,500 times hotter than the average temperature increase of the atmosphere. The temperature will be the average energy of all molecules in it. That means about 2,500°C for the CO2, a total impossibility. All molecules in the air must be similar in temperature, because any difference will rapidly transfer to surrounding molecules. It means an accumulation effect is absolutely necessary to get global warming, but it is a physical impossibility. Does anyone really need to know anything more about the fraud of global warming? Every molecule emits radiation due to its vibrations.
Absorbed radiation by carbon dioxide is re-emitted in 83 femto seconds. A transparent gas emits more radiation than an opaque solid. The Stefan-Boltzmann constant states how much radiation is given off at any temperature. That means no accumulation of heat occurs. And it means no spreading of heat to the surrounding 2,500 air molecules occurs before the energy is radiated away. And CO2 is like a 1 watt heater in an auditorium.  A major error of global warming is not noticing that each carbon dioxide molecule is surrounded by 2,500 air molecules and has no way to spread the heat around. This dilution divides the CO2 temperature by 2,500 for the average temperature, which is no heating at all.  Conducting heat to nearby molecules by bumping increases the number of molecules radiating away the added heat, which prevents very many molecules from being heated. Climatologists claim there is 79% radiation in the energy leaving the earth's surface. A white hot light bulb cannot emit that much radiation without a vacuum environment. Reduce it to the 1-3% that it should be and global warming reduces by a factor of 40. The claimed 0.7°C caused by humans would reduce to 0.018°C, if there were such a thing. This error occurred because an erroneous Stefan-Boltzmann constant shows 20-50 times too much radiation at normal temperatures. If all 0.7°C heating said to be caused by humans went into the surface of the oceans to a depth of 100 meters, the ocean surface temperature increase would be 0.025°C, and none of the heating would be left in the air. It means hurricanes are not caused by global warming, nor is coral bleaching. It's an absolute truism that a small amount of something cannot heat a large amount of something without extreme differences, because heat dissipates, always. Four hundred parts per million carbon dioxide in the air is never going to influence the temperature of the atmosphere for this reason. 
More than a century ago, scientists determined that carbon dioxide saturates in the atmosphere and cannot absorb more radiation. This means CO2 absorbs all radiation available to it, so more CO2 cannot absorb more radiation. Additional CO2 only shortens the distance required for complete absorption. The saturation distance for CO2 in the near-surface atmosphere is 10 meters. But climatologists erased the whole concept of saturation by supposedly calculating how much radiation gets to the surface of the atmosphere through fake "radiative transfer equations". Making saturation disappear is an impossibility. While CO2 absorbs fingerprint radiation, it re-emits black body radiation, which means the wavelength shifts to slightly longer. One of the basic errors of climatologists is looking at a trough in the absorption spectrum and assuming it represents heat, while not realizing that the energy is re-emitted at different wavelengths, which is not evident in the absorption spectrum. So they contrive a math to fit their erroneous assumptions. Fingerprint absorption is caused by chemical bonds (electron orbits) stretching and bending, while black body radiation is caused by the whole molecule vibrating. Therefore: 1. With absorbed radiation being re-emitted in 83 femto seconds, global warming caused by humans does not exist. 2. With dilution by 2,500, global warming caused by humans does not exist. 3. With radiation divided by a factor of 40, global warming caused by humans does not exist. 4. With an impossibility of a small amount of something heating a large amount of something, global warming caused by humans does not exist. 5. With saturation, global warming caused by humans does not exist. Incompetents in science imagined global warming and contrived unreal science to get there. Climate is too complex and random for the tools of science. The science is total fakery. 
Real scientists do not go down the path climatologists follow pretending to measure complexities and randomness which cannot be measured. A measurement requires that all influences over the results be identified and separated from other influences. Climate has too many interacting complexities to do that. For these reasons, there is nothing resembling real science to the subject of global warming. Science is a process, not a conclusion. Conclusions come out of a dark pit in global warming science. Fake procedures are claimed, with no explanation or logical purpose. Necessary scientific standards are defied in extreme ways attempting to contrive a subject without accountability. Conservative critics of global warming have been saying that the underlying science is correct, but global warming is not occurring because of the effects of clouds. They haven't looked at the underlying science, because they don’t understand it. Their position has left society with no significant criticism of the basic science of global warming. As a result, criticism is brushed off claiming that it is disproven and needs to stop. Conversely, nothing has been shown to be correct in the science. The burden of proof should be on the scientists, not the critics. One reason for this situation is that hired scientists cannot be significantly critical without being kicked out of science or being denied grants or the ability to publish. There is a long list of scientists who met that fate. This practice alone is a major fraud upon the public. How can science (or anything else) be right, when no one is allowed to criticize it? Truth benefits from criticism. The opposition to criticism points to an unjustifiable position. Criticism is stymied by an absence of validly published research. Research publications on climatology lack the necessary descriptions of methodology. Key information needed for evaluation is omitted in an attempt to obfuscate the subject. 
Without proper publications, the only way criticism can be produced is to draw upon 500 years of evolved knowledge and show that the conclusions are self-contradictory impossibilities. A flat-earther is supposedly someone who can't understand that absorption of radiation means heat. Five hundred years of science has produced a lot more knowledge than that. After absorption, then what? There never was real science to global warming. Modeling is nothing resembling science. It's wishful thinking imposed upon gullible persons, like bloodletting. It creates something to do for incompetents in science who don't know how to produce real science. A tiny amount of something can never heat a large amount of something under any set of conditions. Nothing resembling it is found anywhere in science. This is why parts per million greenhouse gases cannot heat the atmosphere. Heat doesn't do that without nuclear reactions. The second law of thermodynamics says heat dissipates, all the time, everywhere, without exception. There cannot be a greenhouse effect in the atmosphere because of the second law of thermodynamics.

1. Dilution Factor

Climatologists skipped over the dilution factor. Each CO2 molecule in the air would have to be 2,500°C to heat the air 1°C, an impossibility, because there are 2,500 air molecules around each CO2 molecule. If a brick building has 2,500 bricks, heating one brick won't heat the building. There cannot be greenhouse gases creating global warming for this reason. Climatologists admit that the CO2 in the air is about the same temperature as the air, as it would have to be. They are thereby implying that CO2 is a cold conduit for heat. There is no such thing as a cold conduit for heat, as thermal conductivity coefficients show.

2. White Hot Metals

The amount of energy given off by the surface of the earth is claimed to be 79% radiation and 21% conduction and vaporization. White hot metals could not emit 79% radiation under atmospheric conditions.
The real proportion would be 1-3% radiation. Reducing the radiation by a factor of 40 would reduce the calculated global warming by a factor of 40.

3. Trapping Heat

The term "heat trapping gas" is a scientific fraud. Heat cannot be trapped, because it is too dynamic. It flows into and out of the atmosphere in femto seconds. Each vibration by molecules in the air is a wave of radiation being emitted. There are typically 83 femto seconds per bump (both directions). About five bumps remove added energy. Five bumps occur in 415 femto seconds. That's half of a pico second. A half of a pico second for holding heat is not trapping heat. The amount of heat entering from the sun during the day is the amount that leaves during the night. A miniscule amount is not going to get trapped while the rest radiates into space. The claim by some scientists that only greenhouse gases heat the atmosphere is another fraud. Most heat gets into the atmosphere through conduction, convection and evaporation.

4. Heat Capacity

The air has too little heat capacity to warm ocean water or melt Arctic ice. Twelve-year-olds were supposed to learn what heat capacity is, but physicists didn't. To heat oceans with air requires a ratio of 3483 by volume for the same temperatures. The heat capacity for air is 1.2 kJ/m³/°C, while for water it is 4180 kJ/m³/°C. To heat the oceans 0.2°C to a depth of 350 meters would require air losing 0.2°C to a height of 1,219 kilometers (at constant surface pressure). That's 100 atmospheres. The oceans cannot be heated by the atmosphere. Melting ice with air is even more absurd, as an additional "heat of fusion" is required, which is 334 kJ/kg, which is an additional 278,000 m³ of air per °C per m³ of ice. In other words, air in contact with ice sucks the heat out of the air with no effect upon the ice. With a small amount of ice and a lot of air, the cool air gets replaced with warm air, but on a global scale, the replacing does not occur.
It means ice melting has nothing to do with global warming. The Arctic is warming due to warm Pacific Ocean water flowing over the Bering Strait, not a miniscule air temperature increase. With the recent El Niño, the northern Pacific Ocean is warming causing warm water to flow over the Bering Strait to heat the Arctic and melt Arctic ice.

5. Temperature Measurements are Fake

Not only are humans not the cause of global warming, a temperature increase did not actually occur. The temperature measurements were faked. The original data shows no temperature increase over the past 35 years at least, while contrivers lowered earlier measurements and increased recent measurements to show a false increase. Critics have been studying these fabrications for the past six years and found endless examples. Satellite measurements have shown no significant temperature increase since they began making such measurements in the late seventies. Only satellite measurements are suitable for the purpose of climatology, because they average over a wide area and cover everything, while land-based measurements cover about 10% of the earth and have no standards for cross-comparisons or uniformity.

6. Starting at the End-Point

For a mechanism, climatologists used radiative transfer equations to supposedly show 3.7 watts per square meter less radiation leaving the planet than entering from the sun due to carbon dioxide. There can never be a difference between energy inflow and outflow beyond minor transitions because of equilibrium, as climatologists recognize. Yet they claim the 3.7 W/m² is a permanent representation of global warming upon doubling CO2. This number is supposed to result in 1°C near-surface temperature increase as the primary effect by CO2. However, watts per square meter are units of rate, while rates produce continuous change, not a fixed 1°C. The 1°C was supposedly produced by reversing the Stefan-Boltzmann constant, but reversing it is not valid.
(Secondary effects supposedly triple the 1°C to 3°C.) It means climatologists started at the desired end point of 1°C and applied the Stefan-Boltzmann constant in the forward direction to get the 3.7 W/m² attributed to radiative transfer equations. Radiative transfer equations cannot produce any such number, because radiation leaves from all points in the atmosphere with 15-30% going around greenhouse gases. That dynamic, combined with equilibrium, is beyond scientific quantitation. The atmosphere is cooled by radiation which goes around greenhouse gases. The amount going around doesn't matter. A gate half open won't keep in half the sheep. The cooling occurs until equilibrium is established with the amount of energy coming in from the sun. The amount of radiation going around greenhouse gases is said in Wikipedia to be 15-30%. Calculations by climatologists are based upon none going around. They calculate the amount of radiation getting to the top of the atmosphere using "radiative transfer equations." Those equations cannot account for equilibrium, which is a response to every influence upon temperature. If the amount going around were calculated without equilibrium, there would be a 100% error in the range (15-30%), while the product of the radiative transfer equations is said to have about 1% error. That product serves as the primary effect by carbon dioxide, which no one in science questions. Only secondary effects are argued. But the gate is not 15-30% open. Each molecule in the atmosphere radiates energy, with 15-30% going directly into space. That which is absorbed by greenhouse gases is re-emitted with 15-30% going into space. It means the gate is about 99.99% open. The atmosphere cools as fast as heat enters it, leaving very low temperatures in equilibrium with the sun's energy. The equilibrium temperatures are very cold, because heat leaves in all directions during all hours, while it enters from one direction, half the time.
The energy from the sun lands on the surface (mostly), while it leaves from the entire atmosphere at a depth of 12-15 kilometers.

There is no way to produce an equation or number for atmospheric effects. The official climatology position is not an explanation; it's fake mathematics that shows a number to represent heat trapped in the atmosphere, when there is no such thing as trapping heat in the atmosphere. The math is called Radiative Transfer Equations. The result is not a measurement; it is a series of calculations. The calculations cannot account for the smallest fraction of the effects in the atmosphere or climate. So the omissions are filled in with fake modeling. Modeling cannot account for the missing information either. Supposedly, the calculations show that 3.7 watts per square meter of radiation less than the sun's energy would show up at the top of the atmosphere upon doubling the CO2. Failing to escape the atmosphere, this radiation supposedly creates global warming.

Radiative transfer equations are a scheme for calculating global warming. It is totally impossible to produce such a number, because infinite interactions cannot be represented by one number, and the difference between energy inflow and outflow must always average zero due to equilibrium. Disequilibrium is an impossibility. Obviously, the air cools at night. Why would some of the heat be trapped while the rest cools? An impossibility. It's impossible to say what the number (3.7 W/m²) is supposed to represent, since nothing about the subject can be reduced to such a number; and therefore, climatologists cannot produce a consistent description of what the number is supposed to mean. A difference between energy inflow and outflow seems to be the most common interpretation. And then, the number is a rate of energy addition, which cannot be converted into a temperature; yet 1°C is claimed. Supposedly, a reversal of the Stefan-Boltzmann constant converts the rate into a temperature, while reversing the constant is not valid, as it applies to a surface, and there is no surface involved.

Climatologists needed a number to represent global warming, while there is no number where there is equilibrium. Equilibrium means the same amount of radiation goes into space as enters from the sun. All large, dynamic systems keep doing something until an endpoint is reached, which is equilibrium. Things self-adjust until they all balance, which is equilibrium.

The way the earth's energy equilibrium works is that radiation carries energy into space at the same rate the sun adds energy to the earth. Most of the radiation leaves from the atmosphere, not the surface of the earth. The reason is that radiation can leave from every point in a transparent gas, but only from the surface molecules for the opaque solids on the surface. As radiation carries energy away, the atmosphere cools down. The colder it gets, the slower it radiates. It cools until it liberates as much energy as the sun adds. There is a slight delay, as energy builds up during the day and cools down at night. Equilibrium determines the temperature of the atmosphere independent of how the heat gets there. Supposedly, humans ruined the radiation equilibrium, causing less energy to leave the earth than enters from the sun upon doubling the amount of CO2 in the air. It's impossible for the system to not reach equilibrium. Climatologists needed a number to represent global warming, so they said 3.7 W/m² of disequilibrium is the number. But there is no such thing as disequilibrium. The claimed 3.7 W/m² was a mathematical stunt needed to pretend that climatologists can calculate global warming. Otherwise, all numbers disappear in the process of equilibrium occurring. What are scientists if they can't find the numbers? Notice that less energy leaving the earth than entering should result in a continuous buildup of heat. Yet the result is said to be a fixed 1°C temperature increase upon doubling the amount of CO2 in the air. There is no explanation for this discrepancy. It is being overlooked for the purpose of pretending to have a mathematical analysis where there is none.

The fudge factor is the claimed primary effect. It was determined by Myhre et al., 1998 using radiative transfer equations. It says, the amount of heat added to the atmosphere (watts per square meter) equals 5.35 times the natural log of the amount of CO2 after an increase divided by the amount before.

$$\text{Heat increase (W/m}^2\text{)} = 5.35 \ln\left(C / C_0\right)$$

The watts per square meter are then converted into a temperature increase by applying the Stefan-Boltzmann constant. Fake radiative transfer equations were used to show the primary effect of global warming. The supposed result of the radiative transfer equations was converted into a rudimentary fudge factor for simple calculation of the result. It says that doubling the amount of carbon dioxide in the air, even from one molecule to two molecules, would add 3.7 watts per square meter of energy to the earth. A three component equation supposedly takes care of the complex effects of carbon dioxide heating the atmosphere. The problem is not that the fudge factor is a simplified representation; the problem is that there is no science to the calculation. C over C sub zero is the increase in CO2. The question is what would happen upon doubling the amount of carbon dioxide in the air. For this the ratio of C over C sub zero is 2. So the fudge factor is 5.35 times the natural log of 2, which is 3.7 watts per square meter. Climatologists call this effect "forcing." This subject is discussed in the 2001 publication of the IPCC, chapter 6 (6.3.1) (http://www.ipcc.ch/ipccreports/tar/wg1/219.htm) (reference 1). Stefan Rahmstorf (in a book by Ernesto Zedillo, 2008) cited the IPCC saying: "Without any feedbacks, a doubling of CO2 (which amounts to a forcing of 3.7 W/m²) would result in 1°C global warming, which is easy to calculate and is undisputed." The fudge factor is invalid, because it removes saturation. Nothing can remove saturation. Nowhere is there an explanation of how or why saturation was removed in the calculation of the fudge factor.
The fudge factor is derived out of muddle which shows no other purpose than removing saturation from the effects. The derivation for the fudge factor was the use of radiative transfer equations by Myhre et al. in 1998. There was no logic in using the radiative transfer equations besides muddling the subject to remove saturation, because there was no known mechanism, and the process of calculating radiative transfer tells nothing of relevance to any suggested mechanism.
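
The arithmetic behind the 3.7 watts per square meter figure can be reproduced from the quoted expression alone. The following is a minimal sketch; the function name `forcing_w_per_m2` is a label chosen here for illustration and does not come from Myhre et al. or the IPCC text.

```python
import math


def forcing_w_per_m2(c_after: float, c_before: float) -> float:
    """Evaluate 5.35 * ln(C / C0), the expression quoted in the text.

    The function name and argument names are illustrative assumptions.
    """
    return 5.35 * math.log(c_after / c_before)


# Doubling: the ratio C / C0 is 2 regardless of the starting amount.
doubling = forcing_w_per_m2(2.0, 1.0)
print(f"Doubling CO2: {doubling:.2f} W/m^2")
```

Because only the ratio enters the expression, `forcing_w_per_m2(800, 400)` gives the same value as `forcing_w_per_m2(2, 1)`, which is the "one molecule to two molecules" point made above.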

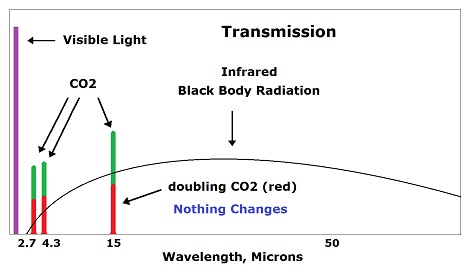

Real scientists determined a century ago, and ever since, that saturation precludes global warming by carbon dioxide. Saturation means all radiation available gets used up in a short distance (10 meters), so more CO2 cannot absorb more radiation. Fakes pretend to get around saturation but can never explain how. Supposedly, each molecule of greenhouse gas adds heat to the atmosphere by absorbing radiation. For this to occur, there must be some radiation which would otherwise go through the atmosphere, so it can be blocked. That radiation does not exist.

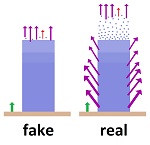

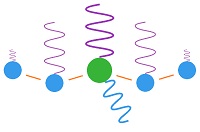

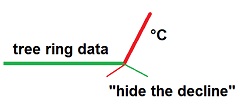

Rationalizers look for a situation represented by the image on the left, where some radiation would go through. At first, they said molecules on the shoulders of the absorption peaks are thin and do what is shown in the image on the left. But that claim would not stand up to criticism; so they switched to the upper atmosphere, where molecules are thinner. The molecules don't get thin enough until they are far beyond the top of the normal atmosphere (troposphere).

The insurmountable problem of rationalizers is that when the molecules get thin enough to allow radiation through, they spread the heat so thin that no significant temperature increase can occur. The molecules would have to be one thousandth as dense as at the surface. But that means one thousandth as much temperature change for each unit of heat. Carbon dioxide absorbs all radiation available to it in the center of its 15 µm band by the time the radiation travels 10 meters near the earth's surface (7. Heinz Hug). Where then is the non-saturation occurring? Let's say non-saturation occurs when the radiation goes beyond the top of the troposphere. The top of the troposphere averages around 17 km. The density of the shoulder molecules must be 10/17,000 times that of the center of the absorption peak. This is 0.06% as much as the center molecules (0.00059).

Consider how far apart these molecules are. A CO2 molecule is about 200 pico meters across, and nitrogen about the same. The atmosphere is now about 400 ppm CO2. This means there is 500 nano meters of distance between each CO2 molecule (1/400 ppm x 200 pm = 500 nm). The 0.00059 which does not saturate would have a spacing of 1,700 times that amount (1/0.00059 = 1,700), which is 0.85 milli meters. The CO2 molecules which absorb more radiation for supposed global warming are 0.85 mm apart. This distance is visible with the naked eye. The dilution factor for CO2 in the atmosphere is one molecule in 2,500 air molecules. But only the unsaturated molecules can absorb more radiation, and they are diluted to one part in 2.5 million air molecules (one thousandth of the CO2 molecules). A small amount of something can never heat a large amount (apart from nuclear reactions) because heat always dissipates (the second law of thermodynamics) instead of accumulating.
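
The spacing figures above can be checked step by step. This is a minimal sketch that follows the same linear estimate used in the text (air molecules per CO2 molecule multiplied by molecule width); the variable names are chosen here for illustration, and the inputs are the values stated in the text.

```python
CO2_PPM = 400                 # CO2 concentration stated in the text
MOLECULE_WIDTH_PM = 200       # approximate molecule width stated in the text
SATURATION_DISTANCE_M = 10    # saturation distance cited from Heinz Hug
TROPOPAUSE_HEIGHT_M = 17_000  # top of the troposphere as used in the text

# One CO2 molecule per this many air molecules (the dilution factor).
air_per_co2 = 1_000_000 / CO2_PPM

# Linear spacing between CO2 molecules, converted from pico meters to nano meters.
spacing_nm = air_per_co2 * MOLECULE_WIDTH_PM / 1_000

# Fraction of molecules that would not saturate within the troposphere.
unsaturated_fraction = SATURATION_DISTANCE_M / TROPOPAUSE_HEIGHT_M

# Spacing of those molecules, converted from nano meters to milli meters.
unsaturated_spacing_mm = spacing_nm / unsaturated_fraction / 1_000_000

print(f"Air molecules per CO2 molecule: {air_per_co2:.0f}")
print(f"Spacing between CO2 molecules: {spacing_nm:.0f} nm")
print(f"Unsaturated fraction: {unsaturated_fraction:.5f}")
print(f"Spacing of unsaturated molecules: {unsaturated_spacing_mm:.2f} mm")
```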

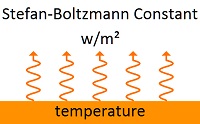

The Stefan-Boltzmann constant is used to show the amount of radiation given off by matter at a particular temperature. It shows 20-50 times too much radiation at normal temperatures.
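
For reference, the watts per square meter that the constant yields at a given temperature can be computed directly. The sketch below is illustrative only: the function name `radiated_w_per_m2` and its optional `emissivity` parameter are assumptions made here, the constant is the value quoted in this chapter, and the temperatures are the examples discussed in this chapter.

```python
SIGMA = 5.670373e-8  # Stefan-Boltzmann constant in W/m^2/K^4, as quoted in this chapter


def radiated_w_per_m2(celsius: float, emissivity: float = 1.0) -> float:
    """Return emissivity * SIGMA * K^4, with K taken as 273 + degrees Celsius.

    The name and the emissivity parameter are illustrative assumptions.
    """
    kelvin = 273 + celsius  # same conversion the chapter uses
    return emissivity * SIGMA * kelvin ** 4


# Temperatures used as examples in this chapter, in degrees Celsius.
for celsius in (27, 15, 0, 37):
    print(f"{celsius:>3} C -> {radiated_w_per_m2(celsius):.0f} W/m^2")
```

Passing an emissivity below 1.0 simply scales the result, for example `radiated_w_per_m2(15, emissivity=0.7)` for a material that emits 70% as much.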

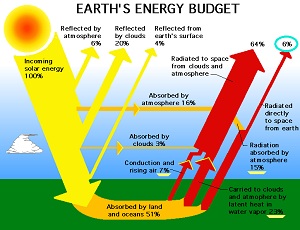

This constant implies that all matter is the same in emitting radiation. Since there are variations, adjustments are made, which are called emissivity, usually given as a percent. For example, some type of wood might give off 70% as much radiation; so its emissivity is 70%, which is multiplied by the result of the Stefan-Boltzmann constant for that type of wood. This constant can only be applied to the surfaces of opaque solids, not gases like the atmosphere, because radiation is emitted much more readily from a transparent gas than an opaque solid. In a transparent gas, radiation can leave from every molecule, while an opaque solid only allows radiation to leave from the surface molecules. But the Stefan-Boltzmann constant is routinely applied to the atmosphere. How can an error that gross exist in physics? Overlooking such errors is standard operating procedure in physics. Usually, incongruous math conceals the absurdity. In climatology, the absurdities are more visible than usual. The reason the Stefan-Boltzmann constant is applied to the atmosphere is that there are no significant tools available for applying science to climatology. Climatology is so complex and random that it evades scientific study. But after 120 years of failing to convince real scientists that global warming is a problem, the promoters of the cause began applying complex science to the subject. They had no real tools of science to work with, so they misapplied whatever they could find for scientific procedures. Besides using the Stefan-Boltzmann constant for atmospheric effects, they used modeling to fill in the gaps for missing information. Modeling is nothing resembling a scientific procedure, as it is not a measurement, as shown by the endless variations for results that come out of modeling.
The Stefan-Boltzmann constant is this:

$$\text{W/m}^2 = 5.670373 \times 10^{-8} \times K^4$$

This result is the number of watts per square meter of infrared radiation supposedly given off by matter at a temperature represented by K (degrees Kelvin, which is 273 + °C). At a normal temperature of 27°C (80°F), the Stefan-Boltzmann constant without emissivity indicates 459 W/m² being radiated. At the assumed average temperature of the earth (15°C, 59°F), it's 390 W/m². At the freezing temperature of water (0°C, 32°F), it's 315 W/m². On a hot day of 37°C (98°F), it's 524 W/m². It isn't happening. Normal temperature matter is not giving off that much infrared radiation.

The latest NASA energy budget as shown graphically uses the same numbers as the Kiehl-Trenberth model with minor updated numbers. The Kiehl-Trenberth model is the standard reference for energy flows as shown in the IPCC (reference 2) reports. This model is severely constrained by the need to apply the Stefan-Boltzmann constant to radiation being emitted from the surface of the earth. That amount of radiation is stupendously ridiculous. It shows as much radiation being given off by the cold surface of the earth as a white hot light bulb, which is stated to be 79% of the energy leaving the surface of the earth. This number shows how far off the Stefan-Boltzmann constant is. There should be 1-3% of the energy leaving the surface of the earth in the form of radiation, the rest leaving as conduction, convection and evaporation. If the real amount is 2%, it shows the Stefan-Boltzmann constant to be off by a factor of 40. The Kiehl-Trenberth model was forced to show such ridiculously high radiation, because the numbers would not fit into the equations with less radiation due to a dependence upon the Stefan-Boltzmann constant.

Recent NASA Energy Budget

This model shows 398.2 watts per square meter of radiation being emitted from the surface of the earth. Conduction and convection are said to be 18.4 W/m². Evaporation is said to be 86.4 W/m². 398.2 ÷ (398.2 + 18.4 + 86.4) = 79% radiation.

Earlier NASA Energy Budget

For several years, NASA used this earlier energy budget, which shows 41% of the energy leaving the surface of the earth to be in the form of radiation. It shows radiation leaving the surface of the earth as 15% absorbed into the atmosphere and 6% going into space, which is a total of 21%. It shows 7% for conduction and convection and 23% for evaporation. 21% ÷ (21% + 7% + 23%) = 41% radiation. Why did NASA show 41% radiation for several years? It looks like guesswork. Eventually, they had to conform to the Kiehl-Trenberth model, since it is in the IPCC reports, and the Stefan-Boltzmann constant leaves little room for variation on that number.

Why should the amount of radiation leaving the surface of the earth be 1-3%? Fans would not be used for cooling if radiation were a significant method of getting rid of heat. The earth's surface has a constant breeze blowing over it on average. With a rough surface and a breeze, almost all heat loss will be due to conduction, convection and evaporation.

Emissivity Omitted

In addition to the absurdly high radiation required by the Stefan-Boltzmann constant, the 390 W/m² (revised to 398.2) radiation leaving from the surface of the earth is supposed to be adjusted for emissivity, which is nowadays said to be 0.64 for the earth’s surface. This means 0.64 times 398.2, which equals 255 W/m² instead of 398.2 W/m². Yet the recently produced NASA energy budget continues to show the same numbers as the Kiehl-Trenberth model. Presumably, when the Kiehl-Trenberth model was produced in 1997, a number did not exist for the emissivity of the earth’s surface, so it was omitted. Later, the model by NASA reduced the radiation from 79% to 41%, presumably attempting to make it look more credible. But by then, the Kiehl-Trenberth number had been enshrined in several editions of the IPCC reports, so NASA apparently felt maintaining the same number would be less incriminating than reducing it to almost one half.

And still, emissivity was not used to reduce the number to 255 W/m², which shows that a consistent absurdity was more important to them than correct scientific procedures.

This is the Kiehl-Trenberth model (reference 3). (Jeffrey T. Kiehl, Kevin Trenberth (1997): Earth’s Annual Global Mean Energy Budget; in Bulletin of the American Meteorological Society, Vol. 78, No. 2/1997, pp. 197-208) The Stefan-Boltzmann constant is this:

$$\text{W/m}^2 = 5.67051 \times 10^{-8} \times K^4$$

With the Stefan-Boltzmann constant, the surface of the earth must be giving off 390 watts per square meter of radiation at its average temperature of 15°C.
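The figures quoted in this section can be re-derived with a few lines of arithmetic. The sketch below checks the arithmetic only. It assumes the conversion K = 273 + °C used in the text, the constant 5.670373 × 10⁻⁸, and the budget components as quoted; the function names are illustrative and do not come from any cited source.

```python
# Arithmetic check of the radiation figures quoted in the text.
# Assumption: K = 273 + degrees C, as the text states. Names are illustrative.

SIGMA = 5.670373e-8  # Stefan-Boltzmann constant as quoted, W/m^2 per K^4


def sb_flux(temp_c, emissivity=1.0):
    """Flux in W/m^2 given by the Stefan-Boltzmann formula at temp_c degrees C."""
    kelvin = 273 + temp_c
    return emissivity * SIGMA * kelvin ** 4


def radiation_share(radiation, conduction_convection, evaporation):
    """Radiation as a fraction of the total energy leaving the surface."""
    return radiation / (radiation + conduction_convection + evaporation)


if __name__ == "__main__":
    # The four temperatures used in the text.
    for temp_c in (27, 15, 0, 37):
        print(f"{temp_c:>3} C: {sb_flux(temp_c):.1f} W/m^2")
    # Emissivity adjustment applied to the revised 398.2 W/m^2 figure.
    print(f"0.64 x 398.2 = {0.64 * 398.2:.1f} W/m^2")
    # Radiation share in the recent (W/m^2) and earlier (percent) budgets.
    print(f"recent budget share:  {radiation_share(398.2, 18.4, 86.4):.1%}")
    print(f"earlier budget share: {radiation_share(21, 7, 23):.1%}")
```

Rounded to whole numbers, the results match the 459, 390, 315 and 524 W/m² figures, the 255 W/m² emissivity-adjusted figure, and the 79% and 41% shares.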

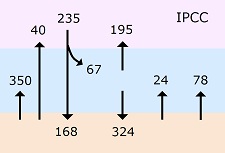

Radiation from sun onto earth's surface: 235 - 67 = 168 W/m². Radiation from atmosphere to earth: 324 W/m². Total on earth's surface: 324 + 168 = 492 W/m². From surface by conduction (air rising): 24 W/m². From surface by evaporation: 78 W/m². From surface as radiation: 390 W/m². Of the 390 W/m²: Net radiation from surface to atmosphere: 350 - 324 = 26 W/m². Net energy from surface to atmosphere: 24 + 78 + 26 = 128 W/m². From sun to atmosphere: 67 W/m². Emitted from atmosphere to space: 128 + 67 = 195 W/m². Total into space: 195 + 40 = 235 W/m².

Alarmist climatologists use this procedure to show that the numbers can be balanced when using the Stefan-Boltzmann constant, and the greenhouse effect is supposed to be a necessary method of getting the surface temperature up from the -18°C which liberates 235 W/m², based on the Stefan-Boltzmann constant, to 15°C. There are two major problems visible in the numbers shown. One is that only 24 W/m² leave the surface as conduction, with 390 W/m² leaving as radiation. That's 16 times as much radiation as conduction. Nothing resembling that happens below the temperature of white hot metals. Those numbers were forced into the method because of the preposterously high radiation indicated by the Stefan-Boltzmann constant.

To account for the extremely high radiation indicated by the Stefan-Boltzmann constant, there had to be a lot of radiation interacting with the earth's surface, specifically 324 W/m² going from the atmosphere back to the surface. This amount left almost nothing for conduction, convection and evaporation. The 390 W/m² being emitted from the surface included 40 W/m² going into space and 350 W/m² going into the atmosphere. The 324 W/m² coming back out of the atmosphere and onto the surface had to be less than the 350 W/m² going in. The 324 W/m² left almost no space for conduction, convection and evaporation, because most of it had to be used to create the 390 W/m².
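The bookkeeping in the list of energy flows above can be laid out as a short script. This is a minimal sketch that repeats the additions and subtractions using the values as quoted; the function and key names are illustrative, not part of the Kiehl-Trenberth paper.

```python
# Bookkeeping check of the energy-flow numbers listed in the text (all W/m^2).
# Names are illustrative; the input values are the ones quoted in the text.


def surface_budget():
    """Repeat the text's additions and subtractions and return each result."""
    solar_in = 235              # solar energy absorbed by the earth
    solar_to_atmosphere = 67    # portion absorbed by the atmosphere
    back_radiation = 324        # atmosphere to surface
    conduction = 24             # surface to atmosphere (air rising)
    evaporation = 78            # surface to atmosphere
    surface_radiation = 390     # emitted from the surface
    surface_to_space = 40       # part of the 390 that goes directly to space

    solar_to_surface = solar_in - solar_to_atmosphere
    radiation_into_atmosphere = surface_radiation - surface_to_space
    net_radiation = radiation_into_atmosphere - back_radiation
    net_to_atmosphere = conduction + evaporation + net_radiation
    atmosphere_to_space = net_to_atmosphere + solar_to_atmosphere

    return {
        "solar_to_surface": solar_to_surface,
        "surface_in": solar_to_surface + back_radiation,
        "surface_out": conduction + evaporation + surface_radiation,
        "net_radiation": net_radiation,
        "net_to_atmosphere": net_to_atmosphere,
        "atmosphere_to_space": atmosphere_to_space,
        "total_to_space": atmosphere_to_space + surface_to_space,
        "radiation_to_conduction": surface_radiation / conduction,
    }


if __name__ == "__main__":
    for name, value in surface_budget().items():
        print(f"{name:>24}: {value}")
```

The script returns 492 W/m² for both the energy arriving at and leaving the surface, 235 W/m² for the total into space, and a radiation-to-conduction ratio of about 16.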
Balancing ridiculous numbers was more important to alarmist climatologists than a credible logic. Throughout the global warming issue, logic is sacrificed to absurd claims and fake mathematics including falsified data. It also means physicists made up the Stefan-Boltzmann constant off the top of their heads with no relationship to objective reality. It rationalizes fake math and numbers for greenhouse gases, which requires a lot of radiation, but contradicts logic and evidence. The number one claim produced by nonscientists, particularly bureaucratic authorities, is that greenhouse gases supposedly heated the planet by 33°C. This number, or a description of it, is at the top of nearly every web site on the dangers of "climate change," which nearly every state and local government maintains. The derivation of this number is disgusting; yet it is repeated by some Ph.Ds. and presumably derived by Ph.Ds. This claim convinces nonscientists that global warming is occurring and of much importance. Until nonscientist activists can get beyond this assumption, they can't be told anything more about global warming. The stupidity of it is the assumption that radiation is the only way heat gets into the atmosphere. Most heat gets into the atmosphere through conduction, convection and evaporation, not radiation. Conduction and evaporation must at least reduce the 33°C to a smaller number. Additional factors make it a lot smaller number, even for most climatologists. But the problem is not just the size of the number, the problem is that there is a lot more to the subject than nonscientists assume; but they have no reason to consider any of it, when 33°C tells them the whole story. This is why almost nothing else is mentioned on government web sites. Once you see the 33°C, there is nothing more you need to know about global warming. The sun puts an average of 235 watts per square meter of energy onto the earth. 
The Stefan-Boltzmann constant says matter emits this amount of radiation at a temperature of -18°C. But with an atmosphere the global average temperature near the earth's surface is 15°C, which is 33°C higher. Therefore, greenhouse gases supposedly heated the earth by 33°C. They missed the conduction, convection and evaporation which heat the atmosphere without greenhouse gases influencing the result. No real scientists could miss the conduction, convection and evaporation. But a few supposed scientists feed this error to nonscientists as their most convincing point. Here's how this subject was explained for several years on the web site of the Union of Concerned Scientists (removed later). It says this: "The 'greenhouse effect' refers to the natural phenomenon that keeps the Earth in a temperature range that allows life to flourish. The sun's enormous energy warms the Earth's surface and its atmosphere. As this energy radiates back toward space as heat, a portion is absorbed by a delicate balance of heat-trapping gases in the atmosphere —among them carbon dioxide and methane—which creates an insulating layer. With the temperature control of the greenhouse effect, the Earth has an average surface temperature of 59°F (15°C). Without it, the average surface temperature would be 0°F (-18°C)... Scientists have concluded that human activities are contributing to global warming by adding large amounts of heat-trapping gases to the atmosphere...we release carbon dioxide and other heat-trapping gases into the air." Were greenhouse gases allowing the Cambrian Explosion of Life to occur 543 million years ago when there was 20 times as much CO2 in the air? If so, humans must be doing wonders for life. What is delicate about 20 times as much CO2 in the air when modern photosynthesis evolved? Climatologists cannot explain a mechanism for global warming. 
Instead they use fake numbers, such as 3.7 watts per square meter to represent heat trapped in the atmosphere due to human activity. There are no square meters in the atmosphere. It's strange that radiation was calculated, when there is no method of converting radiation into temperature. Temperatures have to be measured; they cannot be calculated. The reason is that infinite complexities related to rates of heat loss and gain determine temperature. For routine events, engineers will set up a model system to determine expected results through trial and error. Atmospheric change is not that sort of routine event.

Radiative transfer equations were used to supposedly show 3.7 watts per square meter less radiation leaving the planet than entering from the sun upon doubling CO2. There can never be a difference between energy inflow and outflow beyond minor transitions because of equilibrium. The disequilibrium is only applied to human activity, as if nothing else in 4 billion years of climatology, including volcanoes putting CO2 in the air, created disequilibrium. With fake science, no two climatologists can agree upon much. So various explanations exist for the 3.7 W/m². Some say it pushes the equilibrium temperature upward. But there is no mechanism for doing so. Watts per square meter represent a rate of energy addition, which does not produce a stable temperature without an equal rate of subtraction. There would have to be two calculations to include both the addition and subtraction.

This rate of energy addition to the atmosphere (3.7 W/m²) is supposed to result in 1°C near-surface temperature increase as the primary effect by CO2. The 1°C was supposedly produced by reversing the Stefan-Boltzmann constant, but reversing that constant is not valid, because it only applies to surfaces. Secondary effects supposedly triple the 1°C to 3°C. To model temperature increase due to CO2, a primary effect is the starting point, and then secondary (feedback) effects are added.

The primary effect is described as "radiative forcing due to CO2 without feedback," which is the fudge factor, and this is converted to a temperature with a "conversion factor." The result is said to be 1°C for doubling CO2 in the atmosphere. The simple math for the primary effect (fudge factor) is this:

$$5.35 \ln 2 = 3.7 \text{ W/m}^2 = 1^\circ\text{C}$$

The question is what happens upon doubling the amount of CO2 in the air. For doubling CO2, the ratio C/C0 is 2, so:

$$5.35 \ln 2 = 3.708 \text{ W/m}^2$$

This little calculation is promoted as an unquestionable law of physics upon which all else in climatology is based. It is the primary effect from which secondary effects are modeled. Only the secondary effects are in question. The calculation is total fakery. What this means is that climatologists claim that the primary effect upon doubling carbon dioxide in the atmosphere will heat the atmosphere by 1°C, and then modeling is used to determine secondary effects, which supposedly add 2°C for a total of about 3°C. The primary effect is not questioned, supposedly being an absolute law of physics, and only the secondary effects are studied and argued.

Climatologists convert the 3.7 W/m² into 1°C by reversing the Stefan-Boltzmann constant; but reversing that constant is not valid, because it only applies to surfaces. Here's how the Stefan-Boltzmann constant is reversed. The SBC is this:

$$\text{W/m}^2 = 5.670373 \times 10^{-8} \times K^4$$

The global average, near-surface temperature is said to be 15°C. Average emissivity is said to be 0.64. The desired end-point of 1°C is plugged in going from the global average temperature of 15°C to 16°C. The end result is 3.486 W/m², and the reversal shows that it produces 1.064°C temperature increase.
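The two numbers in this reversal can be reproduced directly. The sketch below is illustrative only: it assumes K = 273 + °C as used earlier in the chapter, the emissivity of 0.64, and the 5.35 ln(C/C0) expression as quoted; the function names are made up for this example.

```python
import math

# Reproduces the "reversal" arithmetic described in the text.
# Assumptions: K = 273 + degrees C, emissivity 0.64; names are illustrative.

SIGMA = 5.670373e-8   # Stefan-Boltzmann constant as quoted
EMISSIVITY = 0.64     # average emissivity as quoted


def forcing(concentration_ratio):
    """The quoted primary effect, 5.35 * ln(C/C0), in W/m^2."""
    return 5.35 * math.log(concentration_ratio)


def flux_change(start_c=15, end_c=16):
    """Change in Stefan-Boltzmann flux (with emissivity) between two temperatures."""
    start_k = 273 + start_c
    end_k = 273 + end_c
    return EMISSIVITY * SIGMA * (end_k ** 4 - start_k ** 4)


if __name__ == "__main__":
    doubling = forcing(2)        # about 3.708 W/m^2
    per_degree = flux_change()   # about 3.486 W/m^2 for 15 C -> 16 C
    print(f"forcing for doubling: {doubling:.3f} W/m^2")
    print(f"flux change per 1 C:  {per_degree:.3f} W/m^2")
    print(f"implied temperature:  {doubling / per_degree:.3f} C")
```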

$$3.708 / 3.486 = 1.064^\circ\text{C}$$

The result is the desired 1°C for the primary effect of doubling CO2 in the atmosphere, as if climatologists could calculate such things with extreme precision. They claim about 1% error on this calculation. What this shows is that climatologists started at the desired end-point of 1°C for near-surface temperature increase upon doubling CO2 in the atmosphere and faked the fudge factor through obscure radiative transfer equations which could only be produced using the world's largest computers, eliminating any possibility of critics reproducing their results.

The initial concept of global warming was that more carbon dioxide in the atmosphere would absorb more radiation and heat the atmosphere. Scientists then found that laboratory tests were not showing an increase in radiation absorption with an increase in CO2 for a very simple reason: A very small amount of CO2 absorbs all radiation available to it in a short distance. Adding more CO2 only shortened the distance required for absorbing all of the radiation. Climatologists refer to this concept as "saturation."

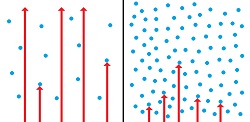

But hold-outs were sure global warming must be caused by increases in CO2 and looked for explanations. During the seventies, as computers became available, complex modeling was used to show heating of the atmosphere upon increases in CO2. In 1979, a quasi governmental office created a study group to clarify the climate influences of carbon dioxide. The result was a publication by Charney et al, 1979 (reference 4), who used modeling of atmospheric effects. Their conclusion was that a doubling of CO2 in the atmosphere would result in a temperature increase of 3°C. The claims were total fakery. Scientists do not have the slightest ability to convert the details, complexities and randomness of the atmosphere to measurement or calculation, which is why weathermen cannot predict more than a few days for simple elements such as temperature and precipitation. Charney et al claimed to model such things as "horizontally diffusive heat exchange" and "heat balance." The terms used are nothing but word salad. There is no such thing as horizontally diffusive heat exchange in the atmosphere or oceans. In large fluids, diffusion would cover no more than a few nanometers before convection renders it irrelevant. Why add "heat exchange?" There needs to be two mediums with an interface for heat exchange. If atmosphere and oceans were the interface, there is no "horizontally diffusive" element to it. Diffusion is a chemistry concept, not an energy concept. Heat moves through conduction, not diffusion. There is also no such thing as "heat balance." Heat migrates and transforms to and from other forms of energy. There is nothing balanced about it. To model heat through the atmosphere resulting from carbon dioxide, the starting point must be some quantity of heat which is supposed to be moving through the atmosphere. Yet that quantity was the end result of the Charney study rather than the starting point. Numerous other studies used the same basic modeling concepts. 
In 1984 and 1988, Hansen et al (references 5 & 6) used similar modeling but started with a concept of how much heat carbon dioxide should produce determined as "empirical observation," by which they meant the assumed historical record of carbon dioxide heating the atmosphere. The assumed historical record is that humans increased the amount of carbon dioxide in the air by 100 parts per million (ppm) (280-380 ppm) when the first 0.6°C temperature increase occurred in the near-surface atmosphere. Modeling then had the purpose of showing complex future temperatures. Implicitly, the atmosphere would add secondary effects to the primary effect of carbon dioxide. But the historical record included the secondary effects, which means the secondary effects were compounded. In other words, there is no clear concept of a purpose or a logical set of cause-and-effect relationships. Yet Hansen et al arrived at approximately the same conclusion as Charney et al—that the expected temperature increase upon doubling the amount of CO2 in the atmosphere would be about 3°C. This result is always given for numerous such studies with widely varying procedures, which shows that it is nothing but a contrived end result with nothing but fakery for a method of deriving it. How could the same number be produced with and without a starting point for the amount of heat CO2 supposedly produces based on the historical record? The implication is that all research on this question used the exact same procedures and got the same results. But the publications are not produced or described the same way, and procedures and technology seem to have been evolving. There wasn't much need for improvement when they always got the same results. The reason for the invariable 3°C increase upon doubling CO2 is that journalists said they would not be concerned unless the temperature increase would be 3°C. Otherwise, why not just use the historical record? 
If it is extended, it would indicate a temperature increase of 1.7°C upon doubling CO2 in the atmosphere (280/100 × 0.6 = 1.7). To get some other number than 1.7°C upon doubling CO2 is to say the atmosphere is going to do something different than it did in the past. There is no explanation of why it should.

In 1998, Myhre et al (reference 7) did "radiative transfer equations," which supposedly defined the energy increase due to CO2 with extreme precision, which required the world's largest computers. They claimed that the primary effect by CO2 causes 3.7 watts per square meter of energy to accumulate in the atmosphere upon doubling the amount of CO2. One of the absurdities is that there is no accumulation of energy in the atmosphere, as equilibrium requires the average amount of radiation leaving to equal the amount coming in from the sun. Radiative transfer equations also remove the saturation problem. Saturation can be determined in a laboratory in a few minutes. It leads other scientists to conclude that no greenhouse effect can occur for this reason alone. Heinz Hug did such a measurement (reference 8) and said all relevant radiation is absorbed by the time it travels 10 meters in the atmosphere. He wasn't allowed to publish such significant criticism, but he put it on the internet. It means the radiative transfer equations made saturation disappear.

The second law of thermodynamics says heat dissipates, always, without exception. Here's an explanation of how heat dissipates in the atmosphere upon absorbing radiation. The relationship between radiation and temperature cannot be calculated due to infinite complexities. One of the problems is that radiation being absorbed by a molecule is partially transferred to other molecules through conduction and partially re-emitted at black body wavelengths. How the energy is distributed by conduction before being re-emitted determines the temperature increase. No theory can say how the energy is distributed.
This image shows how energy is re-distributed when radiation is absorbed by carbon dioxide in the atmosphere.

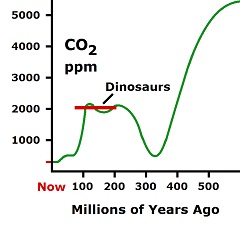

When a molecule of CO2 in the atmosphere absorbs fingerprint radiation (the only thing in question), it increases in vibratory motion, which is heat. As it bumps into surrounding molecules (mostly nitrogen gas), it imparts some motion, which reduces its own motion, while increasing the motion of the other molecule. This bumping goes from molecule to molecule, as the energy spreads through the atmosphere. The vibrating motion of molecules sends out waves of infrared radiation. As the molecular motion decreases, the intensity of the radiation and its frequency get lower. If the average wavelength of emission is 25 microns, there are 83 femto seconds for each initial bump. (Frequency equals velocity over wavelength, and time equals the inverse of frequency: 3 × 10⁸ ÷ 25 × 10⁻⁶ = 12 × 10¹², inverse = 83 × 10⁻¹⁵.)

The number of waves or bumps required to liberate absorbed radiation depends upon the relative temperatures of emitting and absorbing molecules. From a hot surface to a cold atmosphere, strong radiation is absorbed by CO2, and weak radiation is emitted. But most radiation travels less than 10 meters, because the saturation distance is ten meters at sea level air pressure. For this, weak radiation is emitted, absorbed and re-emitted. With weak radiation, five bumps or waves should dissipate the energy absorbed by one wave. The emitted radiation is in fact stronger than the absorbed radiation, because radiation emitted is black body radiation, which means wide bandwidth, while radiation absorbed by CO2 is fingerprint radiation, which is narrow bandwidth. But more than one wave emitted per wave absorbed would be required, because energy is being imparted to surrounding molecules through collisions. Those molecules also emit radiation, which means a few bumps should send the added radiation out. At 5 bumps (two directional) or waves and 83 femto seconds per cycle, the energy radiates away in 415 femto seconds, which is about a half of a pico second.

A hundred bumps would give up half of the energy in 8.3 pico seconds. No one knows exactly how the energy disperses through the surrounding environment. So no one knows how much energy is retained before it is radiated out again. Each of the molecules which receive energy will emit some outflowing radiation, but how much and when cannot be determined. So no one knows how much temperature increase occurs, but it is miniscule to the point of irrelevance when one CO2 molecule out of 2,500 air molecules is doing the absorbing, and the rest are emitting. Of course, most of the heat enters the atmosphere through conduction and evaporation, and that heat is emitted through radiation also.

One of the most ridiculous things about the concept of global warming is the assumption that there is a "delicate balance" which humans are upsetting. There is a ridiculous concept of balance with global warming, where the cumulative effects reach just the right quantities for survival. This result is supposed to be somewhere between 180 and 280 parts per million carbon dioxide in the air. For a billion years? How absurd can anyone be? There was five times as much carbon dioxide in the air during dinosaur years, and twenty times as much when modern photosynthesis began. How many species can live on one twentieth as much food as they evolved on?
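The timing figures in this passage follow from one division and two multiplications. The sketch below is a minimal illustration of that arithmetic; the rounded speed of light and the 5-bump and 100-bump counts are the text's own assumptions, and the function names are illustrative.

```python
# Timing arithmetic from the text: the period of one wave at 25 microns.
# The bump counts are the text's assumptions; names are illustrative.

SPEED_OF_LIGHT = 3e8   # m/s, rounded as in the text
WAVELENGTH = 25e-6     # m (25 microns)


def wave_period_fs(wavelength_m=WAVELENGTH, speed=SPEED_OF_LIGHT):
    """Period of one wave in femtoseconds (inverse of speed / wavelength)."""
    frequency = speed / wavelength_m   # about 12 x 10^12 per second
    return 1e15 / frequency


def dissipation_time_fs(bumps, period_fs=83):
    """Time for a number of bumps, using the text's rounded 83 fs period."""
    return bumps * period_fs


if __name__ == "__main__":
    print(f"period:    {wave_period_fs():.1f} fs")
    print(f"5 bumps:   {dissipation_time_fs(5)} fs")
    print(f"100 bumps: {dissipation_time_fs(100) / 1000} ps")
```

The unrounded period is about 83.3 femto seconds; the text rounds it down to 83 before multiplying, which gives 415 femto seconds for five bumps and 8.3 pico seconds for a hundred.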

All biology is on the verge of becoming extinct due to a shortage of carbon dioxide in the air, which is needed for photosynthesis. Oceans absorb carbon dioxide and convert it into calcium carbonate and limestone. As a result, carbon dioxide almost disappeared from the atmosphere 300 million years ago. In the nick of time, the level bounced back to the amount during the dinosaur years. The bounce-back would have been due to volcanoes, as tectonic plates started moving around and separating at that time. Tectonic plates continually get thicker as the planet cools. They were just getting thick enough to do things during the dinosaur years. Three hundred million years ago is also when conifers evolved. They have needley leaves as a method of maximizing surface area for absorbing carbon dioxide in low supply. The pointedness of the needles also reduces the tendency of dinosaurs to bite into them, though the wood would have been more relevant. Dinosaurs ate nonwoody plants. The claim of oceans acidifying due to the absorption of carbon dioxide is nothing but more fakery. No one has ever found any other pH than 8.1 in the oceans beyond isolated environments such as estuaries. The claims otherwise are all predictions, projections, aquarium tests, etc., at least as they leave the science domain; and then nonscientists take it from there and create everything up to the green Martian disease with it. The reason why the ocean pH never varies is because it is buffered by calcium, which combines with the carbon dioxide to form carbonate. The calcium never runs out, so the ocean pH never varies. All major global effects equilibrate. This means they change until they cannot change further in their interactions. These equilibrium forces are large, and they established themselves billions of years ago. For this reason, the temperature of the globe is in equilibrium with the energy being absorbed by the sun. Equilibrium is not a delicate balance. 
Simple math shows that hurricanes are not caused or influenced by human-induced global warming. The atmosphere would have to cool 55°C to heat the surface of the oceans 2°C. If all 0.7°C heating said to be caused by humans went into the surface of the oceans to a depth of 100 meters, the increase in temperature would be 0.025°C, and none would be left in the air.

Scientists keep saying that hurricanes are not caused by global warming. Activists keep saying they are. The promoters of causes don't let scientists get in their way. The fact that there have been few hurricanes over the past twenty years shows the truth: The claimed heating caused by humans has not been creating hurricanes. On top of that, simple math shows why. The atmosphere would have to lose 55°C to heat the top 100 meters of the ocean 2°C. Logic indicates that at least a 2°C temperature increase on the surface of the oceans would be needed to increase hurricanes significantly. This result, which activists don't realize, is due to air having very low heat capacity, while water has a high heat capacity. It means the heat gets drawn out of air without doing much to the oceans it enters. Melting Arctic ice with air is even more ridiculous, as ice requires 80 times as much heat as warming water requires.

Calculating Ocean Heat

The oceans cover 361 × 10⁶ square kilometers (km²) of the earth's surface. A depth of 100 meters produces 0.1 times that many cubic kilometers, which is 361 × 10⁵ km³. At a billion cubic meters per cubic kilometer, the water consists of 361 × 10¹⁴ cubic meters. At 1025 kg/m³, the sea water is 3.7 × 10¹⁹ kilograms. At a specific heat of 3850 joules per kg per °C or 7700 per 2°C, it takes 2.85 × 10²³ joules of heat to heat that much water 2°C.

Calculating Atmospheric Heat

The mass of the atmosphere is 5.15 × 10¹⁸ kg. At a specific heat for air of 1,000 joules per kilogram per degree centigrade, there will be 5.15 × 10²¹ joules per °C of heating.

Dividing the number of joules required to heat the water by the number of joules required in the air equals 55°C of air temperature reduction. It means the air would have to lose 55 degrees centigrade to heat the oceans 2°C to a depth of 100 meters. The total heating caused by humans was only supposed to be 0.7°C so far. And activists don't need scientists to tell them global warming is not causing hurricanes. Yet any number of fake scientists are saying the oceans are heating, and Arctic ice is melting due to global warming. Most persons don't believe scientists can be that wrong. Too many scientists are, because too many of them are just like the activists: they don't have a clue as to what they are doing, and they move words around as if it were science.

What the Claimed Human Effect of 0.7°C Does

Supposedly, 0.7°C is the amount of heating of the near-surface atmosphere that humans caused with greenhouse gases. What if all that heat went into the top 100 meters of the oceans? More absurdly, how can it still be there if it went into the oceans? From above, it was noted that the atmosphere will produce 5.15 × 10²¹ joules per °C of heating. At 0.7°C, multiply this times 0.7, and it is 3.6 × 10²¹ joules. It was noted above that the amount of water of concern weighs 3.7 × 10¹⁹ kg. Multiplying times the specific heat of 3850 joules per kg per °C equals 1.4 × 10²³ joules per degree centigrade. Dividing this into the 3.6 × 10²¹ joules above yields 0.025°C. This is how much the oceans would be heated to a depth of 100 meters if all heat supposedly produced by humans went into the oceans: 0.025°C. And then there would be none in the air. It shows that greenhouse gases would be irrelevant even if everything said about them were true. There is almost no there there in terms of joules compared to everything else. Surfaces control near-surface temperatures.

Melting polar ice with air is even more ridiculous, because melting ice requires a lot of heat, called heat of fusion, which is 334 kJ/kg (kilojoules per kilogram). Each cubic meter of ice melted would require 261,000 m³ of air losing 1°C (334,000 ÷ 1.28 = 261,000). A cubic meter of water or ice is about 1,000 kg. Melting requires 334 kJ/kg. Combined, it's 334,000 kJ/m³. The specific heat of air is 1 kJ/kg per °C with a density of 1.28 kg/m³ at 0°C. This number can be divided by the height of the atmosphere, which is equivalent to 5 km at normal pressure, and it is 52 atmospheres of height above the ice (261,000 ÷ 5,000 = 52). That's for one meter of ice depth and 1°C of global warming. If the ice is 10 meters thick, 520 atmospheres above it would be required to hold enough heat to melt it. Of course the air would not circulate well enough at more than a few kilometers of height. What really happens is that the air above polar ice rapidly becomes the same temperature as the ice, and nothing melts.

It takes warm ocean currents to melt polar ice. The melting that has been occurring at the North Pole results from warm Pacific Ocean water flowing over the Bering Strait and into the North Pole area. Ice at the South Pole keeps getting thicker, because it sits over land. Warming ocean currents put more moisture in the air, which adds snow inland over Antarctica. Around the edges, a small amount of ice melts due to warming ocean currents. Why ocean currents warm and cool, no one knows, except that ocean temperatures slowly increase between ice ages, and oceans are extremely heterogeneous for temperature.

The glaciers on mountains are totally irrelevant, because they are usually too small. Only the Himalayas are large, and they are not melting, because they are too high to be reached by warm air currents. The low level ice melted shortly after the last ice age. The edges of the mountain glaciers constantly increase and decrease for random reasons.

This effect was shown by the "iceman" found in the Alps after some ice melted. He died there about 5,000 years ago. This means there was no ice where he was about 5,000 years ago, then ice covered him, and then the ice melted again a few years ago. Such ice melting and reforming has nothing to do with greenhouse gases.

Oceans increase in temperature continually between ice ages. Oceans are extremely heterogeneous with rivers and mountains of cold and warm water, because heat is stored in the oceans and doesn't release easily. To then assign a number for human influence is total contrivance. One of the points being missed is that oceans are continuously heating, because they trap energy from the sun and a small amount from geothermal energy. Only ice ages cool the oceans back down. A large part of the change that is occurring is due to oceans continually heating.
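The heat-capacity comparison above can be written out as a short calculation. This is a minimal sketch that uses only the figures quoted in the text (ocean area, 100 meter depth, density, specific heats, atmospheric mass); the constant and function names are illustrative.

```python
# Heat-capacity comparison using the figures quoted in the text.
# Names are illustrative; no values beyond those in the text are introduced.

OCEAN_AREA_M2 = 361e6 * 1e6       # 361 x 10^6 km^2 converted to m^2
LAYER_DEPTH_M = 100               # depth of the ocean layer considered
SEAWATER_DENSITY = 1025           # kg/m^3
SEAWATER_SPECIFIC_HEAT = 3850     # J per kg per degree C
ATMOSPHERE_MASS_KG = 5.15e18
AIR_SPECIFIC_HEAT = 1000          # J per kg per degree C


def ocean_layer_mass_kg():
    """Mass of the top 100 m of the oceans."""
    return OCEAN_AREA_M2 * LAYER_DEPTH_M * SEAWATER_DENSITY


def ocean_joules_per_degree():
    return ocean_layer_mass_kg() * SEAWATER_SPECIFIC_HEAT


def air_joules_per_degree():
    return ATMOSPHERE_MASS_KG * AIR_SPECIFIC_HEAT


def air_cooling_for_ocean_warming(ocean_warming_c):
    """Degrees C the whole atmosphere must lose to warm the layer by ocean_warming_c."""
    return ocean_warming_c * ocean_joules_per_degree() / air_joules_per_degree()


def ocean_warming_from_air(air_change_c):
    """Degrees C the layer warms if it takes up all the heat of air_change_c of air."""
    return air_change_c * air_joules_per_degree() / ocean_joules_per_degree()


if __name__ == "__main__":
    print(f"ocean layer mass: {ocean_layer_mass_kg():.2e} kg")
    print(f"air cooling for 2 C of ocean warming: {air_cooling_for_ocean_warming(2):.0f} C")
    print(f"ocean warming from 0.7 C of air: {ocean_warming_from_air(0.7):.3f} C")
```

With these inputs the script gives about 3.7 × 10¹⁹ kg for the water, 55°C for the air, and 0.025°C for the ocean layer.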

This graph is a proxy measurement of ocean temperatures using sea shell analysis. Each peak is an ice age. The past few ice ages have been occurring at exactly 100 thousand year intervals. My impression is that the jagged edges on the lines of the temperature graph are largely due to variations in solar influences, but they cannot create an ice age, because ocean temperatures must heat up substantially, particularly around the Arctic, to put enough moisture in the air to cause more snow to accumulate than can melt.

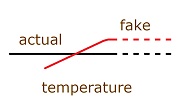

Temperatures stopped rising, because measurements are fake, and they can't be faked upward any farther. Some of the procedures which show a false increase could only be done once, such as eliminating colder stations, and they cannot be carried further. Alteration of measurements is never valid in science. Altered measurements are not reproducible measurements. Science isn't someone's irreproducible wisdom coming out of a dark hole. If it can't be verified, it's garbage. Global warming shows why.

The biggest concern with global warming over the past few years is why near-surface atmospheric temperatures have not been increasing significantly since 1998. Since temperatures never were increasing, why did the fakery stop in 1998? The obvious answer is that the fakery cannot be carried further. The discrepancy with actual measurements is getting too extreme to keep adding fake excuses for alterations of data.

After the email scandal, commonly referred to as climategate, which occurred in 2009, critics looked into temperature measurements, and everywhere they looked they saw tampering which created a temperature increase where raw data showed none. Earlier measurements were being lowered, and recent measurements were being increased to show an upward curve. The explanation given was that earlier measurements were made in the afternoons, and later, the time of measurement was switched to mornings. So a correction was made by lowering earlier measurements. Never do weather stations make a single measurement per day. A temperature has no meaning apart from the highs and lows. It takes more than one measurement to determine highs and lows. Only highs and lows have been recorded for at least as long as fake global warming was measured.

An issue which has actually been argued for quite a while is the "urban heat island effect." It means temperature recordings are getting warmer due to development around the measuring stations. To compensate for the result, temperature measurements need to be lowered, and disputes arise over how much. But then the argument goes the wrong way. Recorded temperature measurements need to be lowered, not increased, where there is a heat island effect.

Satellite measurements showed no significant increase since they began in the seventies, but satellite results were required to be altered to align with the fake land measurements. And then the claim is that there are problems that need to be corrected with land-based measurements.
Maybe the satellite measurements should have been the determining influence, but the fakes cannot live with the result of the satellite measurements. Very little of the earth's surface can actually be measured through weather stations. The gaps are filled in through contrivance, as indicated by the exposed emails called "climategate." The claimed global average temperature changes are totally contrived.

So a study was conducted by meteorologists in 2010 finding these results. The TV station KUSI in San Diego stated it this way: "Skeptical climate researchers have discovered extensive manipulation of the data within the U.S. Government's two primary climate centers: the National Climate Data Center (NCDC) in Asheville, North Carolina and the NASA Goddard Institute for Space Studies (GISS) at Columbia University in New York City. These centers are being accused of creating a strong bias toward warmer temperatures through a system that dramatically trimmed the number and cherry-picked the locations of weather observation stations they use to produce the data set on which temperature record reports are based."

Renowned meteorologist Joseph D'Aleo stated it this way: "NOAA is seriously complicit in data manipulation and fraud. ... NOAA appears to play a key role as a data gatherer/gatekeeper for the global data centers at NASA and CRU."

D'Aleo and Anthony Watts drew these conclusions in their study (reference 9): "The startling conclusion that we cannot tell whether there was any significant “global warming” at all in the 20th century is based on numerous astonishing examples of manipulation and exaggeration of the true level and rate of “global warming”. That is to say, leading meteorological institutions in the USA and around the world have so systematically tampered with instrumental temperature data that it cannot be safely said that there has been any significant net “global warming” in the 20th century."

Itemizing conclusions, they stated:

1. Instrumental temperature data for the pre-satellite era (1850-1980) have been so widely, systematically, and unidirectionally tampered with that it cannot be credibly asserted there has been any significant “global warming” in the 20th century.

This was January 23, 2010. Not a word of it was seen or heard by most persons, while the charade of climate change goes on, and critics are excluded from the media under the pretense of protecting the truth from contamination. Real truth is not produced through the safekeeping of the wise. Real truth is strengthened through criticism. Only fraud needs to be sheltered from criticism.

Climatologists claim warming by a greenhouse gas results in secondary effects, mostly due to increased water vapor, and these effects are twice as large as the primary effect. Since there is no real primary effect, there are no secondary effects. Secondary effects are not measured; they are theorized. The theory would be ridiculous even if there were a primary effect. Natural variations in temperature are extreme. If they were producing twice as much secondary effect, everything would be frying. A second absurdity is that water vapor in the air is not determined by air temperature but by ocean temperature. The third absurdity is that any secondary heating which is greater than the primary heating would produce hysteresis, which means thermal runaway.

The claim that secondary effects produce most of the global warming resulted from a need to increase the 1°C supposedly caused by carbon dioxide into a 3°C effect; so 2°C was tacked on as a secondary effect. The assumption was that the historical record was indicating that if CO2 in the atmosphere were doubled, an increase in temperature of 1°C would occur. But critics were saying the increase would have to be 3°C before they would be concerned. And abracadabra, another 2°C showed up. It was said to be a secondary effect. The historical record included secondary effects.
So there was an inherent contradiction in adding another 2°C as a secondary effect (called feedback). It was rationalism in contempt for facts and logic.

From season to season, temperatures typically vary by 25-40°C. If 1°C triples to 3°C, why does not 25°C triple to 75°C? Perhaps only long-term averages are relevant; but not quite. Temperature changes do what they do in hours for daily variations and weeks for seasonal variations. The lag is due to heat stored in solid earth or ocean water. If the secondary effects are primarily due to water being vaporized, as claimed, dry air should be producing a lot less heat than humid air, like maybe one third as much heat. But we see the opposite, as desert air is the hottest, and ocean air is the coolest.

The claim is that global warming due to carbon dioxide increases the holding capacity of the atmosphere for water vapor, and water vapor is said to be something like 100 times as strong a greenhouse gas as carbon dioxide. (Numbers are hard to pin down, since there is no objective reality to it.) Extending from that starting point, increased holding capacity is said to result in increased water vapor. Specifics are non-existent, as the modeling is never published beyond the equivalent of a news blurb.

Climatologists err in claiming the amount of water vapor in the air is determined by holding capacity. If so, the air would always tend toward saturation. No one knows what the global average humidity is, but a usual guess is somewhere in the vicinity of half saturation. Saturation is typically 3% for warm air, so an average is usually considered to be 1-1.5%. Do the modelers have a better number? Without it, how can their results have less than about 50% error? They used to claim 15% error, but now they give a range with various degrees of certainty. (The errors are additive for hundreds of effects which they claim to model.) Humidity is primarily determined by ocean temperatures.
Air gets drier the farther it gets from the oceans. Cooling draws moisture out of the air by creating precipitation, which includes uprising over mountains. Changes in holding capacity due to supposed effects of greenhouse gases will not occur over oceans, as air temperature over oceans equilibrates with the surface temperature of the oceans. This means greenhouse gases will not determine how much moisture enters the air, which is the essence of the claimed secondary effect.

The forces which remove moisture from the air would also swamp supposed effects by greenhouse gases. Simple changes in temperature do not remove much moisture from the air; only precipitation conditions do. Precipitation conditions involve dramatic effects including lower air pressure and collisions with cold fronts. The process of precipitation then releases massive amounts of heat, as much heat as was absorbed in the evaporation which put the moisture in the air. A 1°C global air temperature increase would disappear in such major forces associated with precipitation conditions.

But climatologists supposedly have the effect calculated and modeled over the next hundred years. Weathermen can't say much about it for more than a few days. Why don't climatologists reveal their superior predictive abilities to the weathermen? When they publish nothing more than a summary and number, they have produced nothing more than a summary and number. They start at the endpoint and juggle numbers to get there.

One of the basic pretenses of physicists including climatologists is that they can read any effect through any amount of noise. They are dealing with minuscule effects, which they supposedly can calculate in disregard of major effects which overwhelm their process. Minuscule effects do not survive major opposing forces.

Hysteresis

If 1°C caused 2°C additional increase due to feedback, the additional 2°C would cause another 4°C increase, and these increases would keep compounding.
But the claim is that the secondary increase due to water vapor feedback cannot increase more than 2°C. Putting a cap on feedback or secondary effects is an impossibility and contrivance. The claimed maximum would have been reached long ago due to the compounding effect, and no further increase would be possible at this time, if there is a 2°C cap on it. There is no concept of why the cap would be any different now than in earlier times. Why would the cap have changed now due to human activities? This concept is nonsensical. Putting a 2°C cap on secondary effects is an oxymoron or self-contradiction, because nothing can have two temperatures simultaneously. A cap says 2°C above some temperature, but the starting point disappears due to the secondary effect. The effect would have to be a force for increase, which could not have a cap on it. Putting a cap on feedback is assuming and implying that there was a normalcy that was stable before humans influenced the result. Only nonscientists are supposed to be that ignorant of science, but supposed scientists make such claims. Temperatures change 1°C about every 100 miles north or south. When and where is the temperature 1°C above normal and creating the 2°C feedback? There is no such thing as a normal starting point, so there is no such thing as a feedback increase. The effect of a feedback increase is called hysteresis. It's a force, not a number. In electronics, hysteresis is caused by positive feedback from the output of an amplifier to the noninverting input. It causes the output to go rapidly to one of the voltage rails. A form of this is used for digital outputs, because it locks rapidly at either the plus or minus rail with nothing in between. The input must cross a threshold voltage to change the output. There is no rail or maximum for temperatures. If temperatures are limited for some reason, that limit cannot be set by some hypothetical starting point, such as a 2°C cap above a hypothetical primary effect. 
There is no such temperature as 2°C above itself. In other words, an upper limit for temperature would have to be determined by some external requirements, not a hypothetical starting point called a primary effect. If a secondary effect can influence itself, it creates a dramatic event. An example is a nuclear reaction. In electronics, thermal runaway is such an example. It burns up components. Combustion is another example. In the atmosphere, no such events have ever occurred, not the least reason being that nothing in the atmosphere can cause heat to generate more heat than it started with, as falsely claimed for greenhouse gases. The concept of secondary effects is an absurdity patched into the analysis for the purpose of showing more heating than could be attributed to carbon dioxide alone.

The pretense of humans upsetting a delicate balance was dependent upon creating the impression in the minds of the public that nature was forever the way it is now. The "hockey stick graph" had that purpose. It needed a straight handle showing the invariability of nature followed by an upward spike showing the dastardliness of human activity.
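
The compounding described under Hysteresis above (1°C producing a further 2°C, which produces a further 4°C, and so on) can be written out as a short sketch. It assumes, as that passage does, that each increment triggers a further increment a fixed multiple of its own size. The function name, the `gain` parameter, and the number of rounds are illustrative assumptions, not anything taken from a climate model.

```python
from typing import List


def compounding_increments(primary_c: float, gain: float, rounds: int) -> List[float]:
    """Return the primary increase followed by `rounds` further increments,
    each one `gain` times the size of the increment before it."""
    increments = [primary_c]
    for _ in range(rounds):
        increments.append(increments[-1] * gain)
    return increments


if __name__ == "__main__":
    # The example from the text: 1 °C primary effect, each step doubling the last.
    steps = compounding_increments(primary_c=1.0, gain=2.0, rounds=4)
    running_total = 0.0
    for step in steps:
        running_total += step
        print(f"increment {step:5.1f} °C, running total {running_total:5.1f} °C")
```

The `gain` is left as a parameter so that values other than the doubling used in the text can be tried.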