It's the quantities that create the absurdity of upper-atmosphere claims. Minuscule amounts of radiation or heat are involved, because most of the radiation that cools the planet leaves from the warmest areas, which means the near-surface troposphere. With such minuscule effects high in the atmosphere, the temperature there would have to increase by approximately 79°C to create a 1°C temperature increase in the near-surface atmosphere. No temperature increase due to increased CO2 has ever been detected high in the atmosphere.

Miniscule amounts of radiation or heat are involved, as most of the radiation which cools the planet leaves from the warmest areas, which means the near-surface troposphere. With miniscule effects high in the atmosphere, the temperature increase would have to be approximately 79°C to create 1°C temperature increase in the near-surface atmosphere. No temperature increase has ever been detected high in the atmosphere due to increased CO2.

The 79°C increase is derived from these influences. An air density of only 30% high in the atmosphere requires a multiplying factor of 3.3. Radiating the heat downward would capture only half of it, since the other half goes upward (a factor of 2). A temperature of -43°C at 9 km would radiate only 40% as much, based on the Stefan-Boltzmann law (a factor of 2.5). Only 30% of black-body radiation gets around the greenhouse gases and reaches the lower atmosphere (a factor of 3.3). About 30% would be reflected due to sharp angles, leaving 70% (a factor of 1.43). Combining these, 1 / (0.3 × 0.5 × 0.4 × 0.3 × 0.7) ≈ 79.
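The arithmetic behind the 79°C figure can be checked with a short sketch. It multiplies the surviving fractions listed above and also checks the 40% Stefan-Boltzmann ratio. One assumption is not stated in the text: the 40% compares -43°C at 9 km with a near-surface temperature of about 15°C. The function names are hypothetical and chosen only for illustration.

```python
# Sketch of the arithmetic behind the ~79°C figure, using the fractions stated in the text.
# Assumption (not in the original): the 40% Stefan-Boltzmann ratio compares -43°C at 9 km
# with a near-surface temperature of about 15°C.

def emission_ratio(t_cold_c: float, t_warm_c: float) -> float:
    """Ratio of black-body emission (proportional to T^4) at two temperatures in °C."""
    t_cold = t_cold_c + 273.15  # convert to kelvin
    t_warm = t_warm_c + 273.15
    return (t_cold / t_warm) ** 4


# Fraction of the effect that survives each step, as listed in the text.
FRACTIONS = {
    "air density high in the atmosphere": 0.30,
    "share radiated downward": 0.50,
    "emission at -43°C relative to the surface": 0.40,
    "share getting around the greenhouse gases": 0.30,
    "share not reflected": 0.70,
}


def required_upper_warming(target_surface_warming_c: float = 1.0) -> float:
    """Upper-air warming needed to yield the target near-surface warming (hypothetical helper)."""
    surviving = 1.0
    for fraction in FRACTIONS.values():
        surviving *= fraction  # each step passes on only this fraction
    return target_surface_warming_c / surviving


if __name__ == "__main__":
    print(f"Stefan-Boltzmann ratio, -43°C vs 15°C: {emission_ratio(-43.0, 15.0):.2f}")
    print(f"Required upper-air warming: {required_upper_warming():.1f} °C")
```

Run as a script, it prints a ratio of about 0.41 and a required warming of about 79.4°C, consistent with the figures in the text.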

This amount assumes equal masses of air in both places. If they are not equal, even a fraction of that amount is still an absurd amount of heat to transfer. It's impossible to say whether the masses should be equal, because the effect does not exist.

It means much of the radiation is doing something other than warming the near-surface atmosphere from above, and the result is an equilibrium unaffected by any possible effect of CO2 high in the atmosphere.

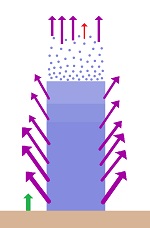

At the scientific level, the prevailing claim appears to be that increasing amounts of CO2 force radiation to escape from higher and higher in the atmosphere, which reduces the amount that can escape. Isolating that claim from everything else is absurd.